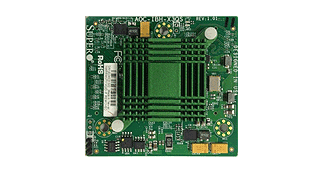

Single-Port, Low-Latency InfiniBand Adapter Cards for SuperBlade

The AOC-IBH-X3QS InfiniBand mezzanine card for SuperBlade delivers low latency and high bandwidth for performance-driven server and storage clustering applications in enterprise data centers, high-performance computing, and embedded environments. Clustered databases, parallelized applications, transactional services, and high-performance embedded I/O applications can achieve significant performance improvements, resulting in reduced completion time and lower cost per operation. The AOC-IBH-X3QS simplifies network deployment by consolidating clustering, communications, storage, and management I/O, and it provides enhanced performance in virtualized server environments. In addition to InfiniBand, the AOC-IBH-X3QS can alternatively be configured as a 10-Gigabit Ethernet NIC when used with the Supermicro SBM-XEM-002M 10-Gigabit Pass-Through Module or the SBM-XEM-X10SM 10Gbps Ethernet switch.


RoHS


User's Guide

See Appendix A of the SuperBlade Network Modules User's Manual for installation instructions.

Firmware

Firmware is available for download.


- Single 40Gb/s InfiniBand port or 10Gb/s Ethernet port

- CPU offload of transport operations

- End-to-end QoS and congestion control

- Hardware-based I/O virtualization

- TCP/UDP/IP stateless offload

- Full support for Intel I/OAT


- InfiniBand:
  - Mellanox ConnectX-3 IB FDR chip
  - Single 4X InfiniBand port
  - 40Gb/s
  - RDMA, Send/Receive semantics
  - Hardware-based congestion control
  - Atomic operations

- Interface:
  - SuperBlade mezzanine card

- Connectivity:
  - Interoperable with InfiniBand switches through the SuperBlade FDR InfiniBand Switch (SBM-IBS-F3616M)
  - Interoperable with 10 Gigabit Ethernet switches through the SuperBlade 10G Ethernet Pass-Through Module (SBM-XEM-002)

- Hardware-based I/O Virtualization:
  - Address translation and protection
  - Multiple queues per virtual machine
  - Native OS performance
  - Complementary to Intel and AMD IOMMU

- CPU Offloads:
  - TCP/UDP/IP stateless offload
  - Intelligent interrupt coalescence
  - Full support for Intel I/OAT
  - Compliant with Microsoft RSS and NetDMA

- Storage Support:
  - T10-compliant Data Integrity Field (DIF) support

- Operating Systems/Distributions (InfiniBand):
  - Novell, Red Hat, Fedora, and others
  - Microsoft Windows Server

- Operating Systems/Distributions (Ethernet):
  - Red Hat Linux

- Operating Conditions:
  - Operating temperature: 0 to 55°C


- Supports all current blade servers unless otherwise indicated; check the individual blade specifications.
