Your NVIDIA Blackwell Journey Starts Here

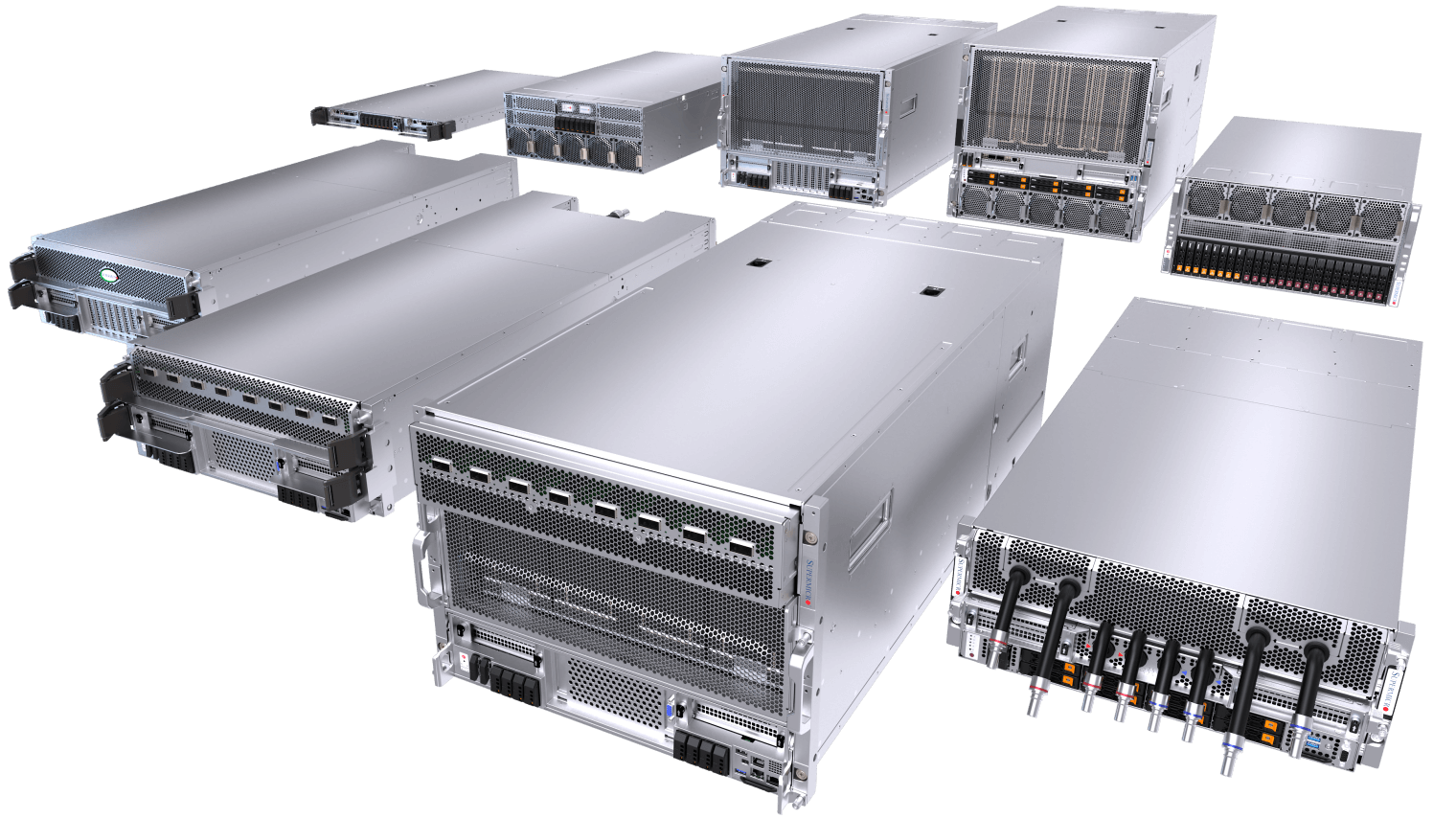

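In this transformative moment for AI, as evolving scaling laws continue to push the limits of data center capability, our latest NVIDIA Blackwell-powered solutions, developed in close collaboration with NVIDIA, deliver unprecedented computing performance, density, and efficiency with next-generation air-cooled and liquid-cooled architectures. With our ready-to-deploy AI data center building block solutions, Supermicro is your preferred partner for starting your NVIDIA Blackwell journey, providing sustainable, cutting-edge solutions that accelerate AI innovation.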

End-to-End AI Data Center Building Block Solutions Advantage

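A broad range of air-cooled and liquid-cooled systems with multiple CPU options, a full data center management software suite, turnkey rack-level integration, complete networking, cabling, and cluster-level L12 validation, plus global delivery, support, and services.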

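- Proven Experience: Supermicro's AI data center building block solutions power the world's largest liquid-cooled AI data center deployments.

- Flexible Offerings: Air- or liquid-cooled, GPU-optimized, with multiple system and rack form factors, CPUs, storage, and networking options, optimized for your needs.

- Liquid Cooling Pioneer: Proven, scalable, plug-and-play liquid cooling solutions to fuel the AI revolution, designed specifically for the NVIDIA Blackwell architecture.

- Faster Time-to-Online: Accelerate delivery with global capacity, world-class deployment expertise, and one-stop-shop services to get your AI into production quickly.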

The Most Compact Hyperscale AI Platform

NVIDIA HGX™ B300 systems optimized for Open Compute Project ORV3, with up to 144 GPUs in a single rack.

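Supermicro's 2-OU liquid-cooled NVIDIA HGX B300 system delivers unmatched GPU density for hyperscale deployments. Built to the OCP ORV3 specification with advanced DLC-2 technology, each compact 8-GPU node fits in a 21-inch rack, with up to 18 nodes and a total of 144 GPUs per rack. With blind-mate manifold connections and a modular GPU/CPU tray architecture, the system sustains up to 1,100 W TDP per B300 GPU while significantly reducing rack footprint, power consumption, and cooling costs. It is ideal for AI factories that require maximum performance density and superior serviceability.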
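
The rack totals above follow from simple multiplication. The sketch below is a hypothetical helper, not part of any Supermicro tool; it multiplies the per-node figures given on this page (18 nodes per rack, 8 GPUs per node, 288 GB HBM3e and up to 1,100 W TDP per GPU). The power value sums GPU TDP only and is not a full rack power budget.

```python
# Hypothetical helper: rack-level totals for the 2-OU HGX B300 ORV3 configuration.
# Inputs are the figures quoted on this page; the power result counts GPU TDP only
# (CPUs, NICs, pumps, and power conversion losses are not included).

def orv3_rack_totals(nodes_per_rack: int = 18,
                     gpus_per_node: int = 8,
                     hbm_gb_per_gpu: int = 288,
                     gpu_tdp_w: int = 1100) -> dict:
    gpus = nodes_per_rack * gpus_per_node            # 18 nodes x 8 GPUs
    return {
        "gpus": gpus,
        "hbm_tb": gpus * hbm_gb_per_gpu / 1000,      # decimal terabytes
        "gpu_tdp_kw": gpus * gpu_tdp_w / 1000,       # summed GPU TDP in kW
    }


if __name__ == "__main__":
    print(orv3_rack_totals())
```

With the defaults this gives 144 GPUs, about 41.5 TB of HBM3e, and about 158.4 kW of aggregate GPU TDP per rack.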

288 GB HBM3e memory per GPU

2-OU Liquid-Cooled System

For NVIDIA HGX B300 8-GPU

Up to 144 NVIDIA Blackwell Ultra GPUs in a single standardized ORV3 rack

Ultra performance for AI inference

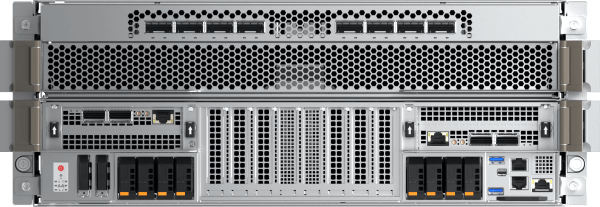

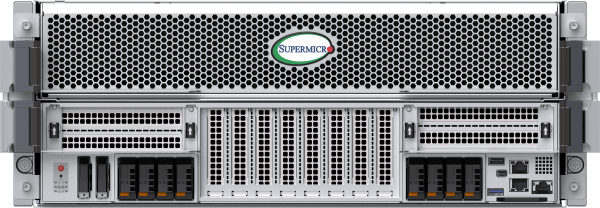

State-of-the-Art Air-Cooled and Liquid-Cooled Architectures for NVIDIA HGX™ B300

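Supermicro NVIDIA HGX platforms power many of the world's largest AI clusters, delivering the compute output for today's transformative AI applications. Now featuring NVIDIA Blackwell Ultra, the 8U air-cooled system maximizes the performance of eight 1,100 W HGX B300 GPUs with a total of 2.3 TB of HBM3e memory. Eight front-facing OSFP ports with integrated ConnectX®-8 SuperNICs running at 800 Gb/s enable turnkey deployment of NVIDIA Quantum-X800 InfiniBand or Spectrum-X™ Ethernet clusters. The 4U liquid-cooled system uses DLC-2 technology with up to 98% heat capture, delivering up to 40% data center power savings. Supermicro Data Center Building Block Solutions® (DCBBS) and on-site deployment expertise provide complete solutions for liquid cooling, network topology and cabling, power delivery, and thermal management to accelerate time-to-online for AI factories.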
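
The 2.3 TB figure is the product of eight GPUs and 288 GB of HBM3e each. A minimal sketch of that arithmetic, with the eight 800 Gb/s OSFP ports added as a nominal aggregate line rate; that value is a sum of port speeds, not a measured throughput, and the constant and function names are illustrative only.

```python
# Per-node totals for the 8-GPU HGX B300 systems, using figures quoted on this page.

GPUS_PER_NODE = 8
HBM_GB_PER_GPU = 288
OSFP_PORTS = 8
PORT_GBPS = 800  # per-port line rate of the integrated ConnectX-8 SuperNICs


def node_hbm_tb() -> float:
    """Total HBM3e per node in decimal terabytes (8 x 288 GB = 2,304 GB)."""
    return GPUS_PER_NODE * HBM_GB_PER_GPU / 1000


def node_scaleout_tbps() -> float:
    """Nominal aggregate front-port line rate in Tb/s (8 x 800 Gb/s)."""
    return OSFP_PORTS * PORT_GBPS / 1000


if __name__ == "__main__":
    print(f"HBM3e per node: {node_hbm_tb():.1f} TB")
    print(f"Front-port line rate: {node_scaleout_tbps():.1f} Tb/s")
```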

4U Liquid-Cooled or 8U Air-Cooled System

For NVIDIA HGX B300 8-GPU

Front I/O liquid-cooled system with integrated NVIDIA ConnectX-8 800 Gb/s networking

Front I/O air-cooled system for NVIDIA Blackwell Ultra HGX B300 8-GPU with integrated NVIDIA ConnectX-8 networking

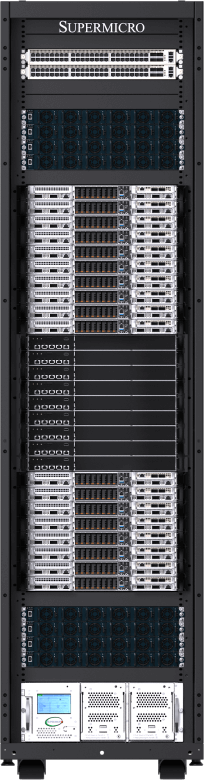

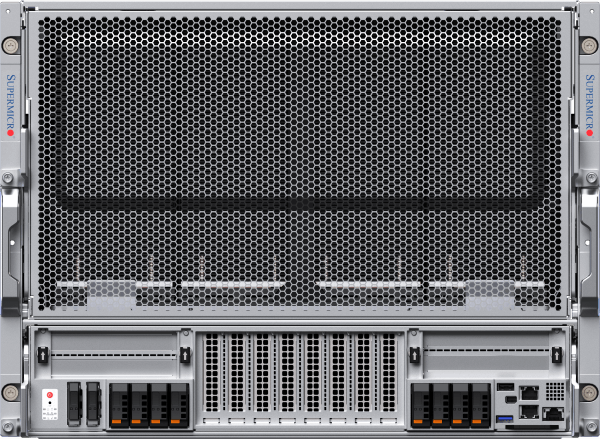

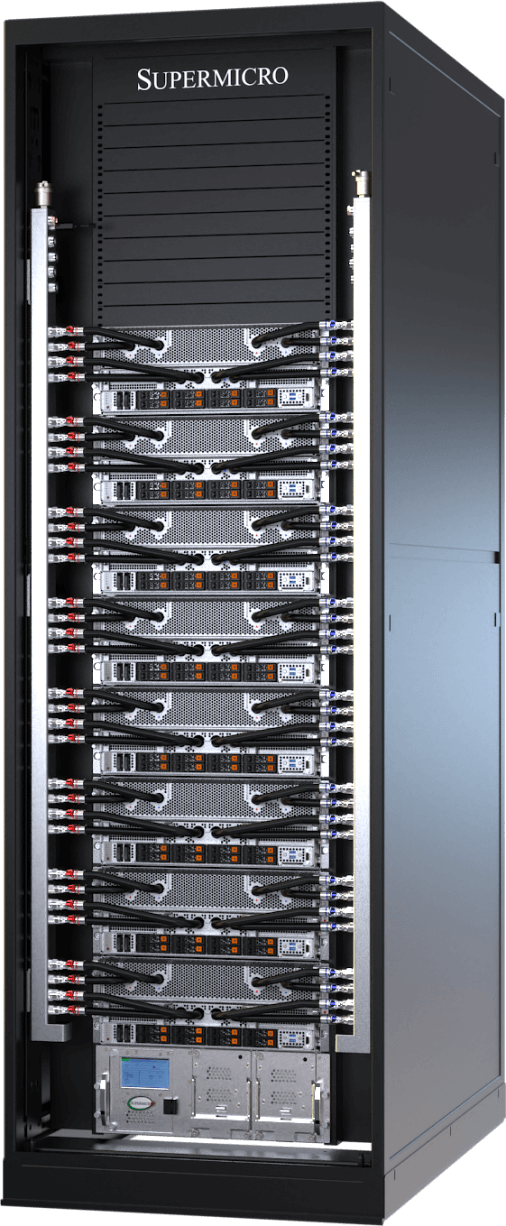

Exascale Computing in a Single Rack

End-to-End Liquid-Cooling Solution for NVIDIA GB300 NVL72

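Supermicro NVIDIA GB300 NVL72 addresses AI compute demands from foundation model training to large-scale inference. It combines high AI performance with Supermicro's direct liquid cooling technology to achieve maximum compute density and efficiency. Based on NVIDIA Blackwell Ultra, a single rack integrates 72 NVIDIA B300 GPUs, each with 288 GB of HBM3e memory. With 1.8 TB/s NVLink interconnect, the GB300 NVL72 can operate as an exascale supercomputer in a single node. Upgraded networking doubles compute fabric performance, supporting speeds of 800 Gb/s. Supermicro's manufacturing capacity and end-to-end services accelerate liquid-cooled AI factory deployments and shorten time-to-market for GB300 NVL72 clusters.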
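
A quick check of what a single 72-GPU NVLink domain adds up to. This sketch assumes the 1.8 TB/s NVLink figure is per GPU, which matches NVIDIA's published per-GPU NVLink bandwidth but is not stated explicitly on this page; the helper name is hypothetical.

```python
# Rack-level totals for one GB300 NVL72 NVLink domain.
# Assumption: 1.8 TB/s is the NVLink bandwidth per GPU, so the domain total is a sum.

GPUS = 72
HBM_GB_PER_GPU = 288
NVLINK_TBPS_PER_GPU = 1.8


def nvl72_totals() -> tuple[float, float]:
    hbm_tb = GPUS * HBM_GB_PER_GPU / 1000        # 72 x 288 GB = 20,736 GB
    nvlink_tbps = GPUS * NVLINK_TBPS_PER_GPU     # summed across all 72 GPUs
    return hbm_tb, nvlink_tbps


if __name__ == "__main__":
    hbm, bw = nvl72_totals()
    print(f"HBM3e in one NVLink domain: {hbm:.1f} TB")
    print(f"Aggregate NVLink bandwidth: {bw:.1f} TB/s")
```

Under that assumption the rack holds about 20.7 TB of HBM3e, and the summed NVLink bandwidth is about 129.6 TB/s.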

NVIDIA GB300 NVL72 and GB200 NVL72

For NVIDIA GB300/GB200 Grace™ Blackwell Superchips

72 NVIDIA Blackwell Ultra GPUs in a single NVIDIA NVLink domain, now with Ultra performance and scalability

72 NVIDIA Blackwell GPUs in a single NVIDIA NVLink domain: the pinnacle of AI computing architecture

Evolved Air-Cooled Systems

Best-selling air-cooled system, redesigned and optimized for NVIDIA HGX B200 8-GPU

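The new air-cooled NVIDIA HGX B200 8-GPU systems feature an enhanced thermal architecture, extensive configurability for CPU, memory, storage, and networking, and improved serviceability from the front or rear. Up to four of the new 8U/10U air-cooled systems can be installed and fully integrated in a single rack, achieving the same density as the previous generation while delivering up to 15x inference and 3x training performance. All Supermicro NVIDIA HGX B200 systems feature a 1:1 GPU-to-NIC ratio, supporting NVIDIA BlueField®-3 or NVIDIA ConnectX®-7 for scaling across high-performance compute fabrics.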

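8U Front I/O or 10U Rear I/O Air-Cooled System

For NVIDIA HGX B200 8-GPU

Front I/O air-cooled system with greater system memory configuration flexibility and cold-aisle serviceability

Rear I/O air-cooled design for large language model training and high-volume inference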

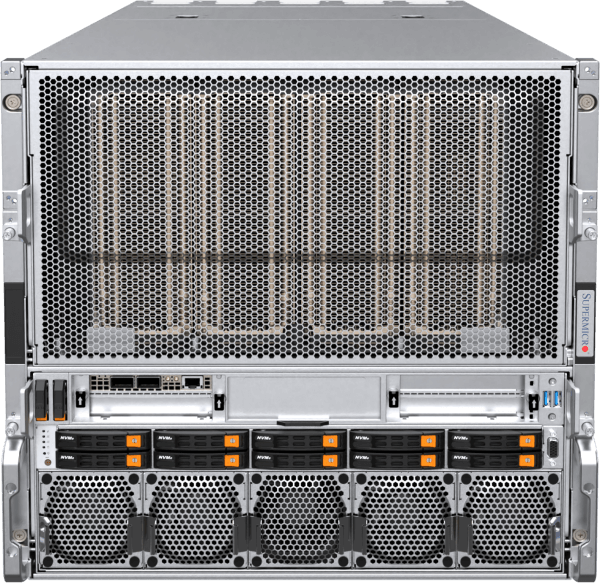

Next-Generation Liquid-Cooled Systems

Up to 96 NVIDIA HGX™ B200 GPUs in a single rack for maximum scalability and efficiency

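The new front I/O liquid-cooled 4U NVIDIA HGX B200 8-GPU system features Supermicro's DLC-2 technology. Direct liquid cooling now captures up to 92% of the heat generated by server components such as CPUs, GPUs, PCIe switches, DIMMs, VRMs, and PSUs, enabling up to 40% data center power savings and reducing noise levels to 50 dB. The new architecture further improves on the efficiency and serviceability of the previous generation, which was designed for NVIDIA HGX H100/H200 8-GPU systems. The new rack-level design with vertical coolant distribution manifolds (CDMs) is available in 42U, 48U, or 52U configurations, so horizontal manifolds no longer occupy valuable rack units. This allows 8 systems with 64 NVIDIA Blackwell GPUs in a 42U rack, and up to 12 systems with 96 NVIDIA Blackwell GPUs in a 52U rack.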
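
The 64- and 96-GPU rack figures can be checked against the 4U system height. The hypothetical `rack_fit` helper below counts only the rack units taken by the GPU systems; how the remaining space is used (switches, power, other hardware) is not specified here and is left out of the sketch.

```python
# Rack fit for 4U liquid-cooled HGX B200 8-GPU systems with vertical CDMs.
# Only GPU-system rack units are counted; other rack contents are not modeled.

SYSTEM_U = 4
GPUS_PER_SYSTEM = 8


def rack_fit(rack_u: int, systems: int) -> dict:
    used_u = systems * SYSTEM_U
    if used_u > rack_u:
        raise ValueError(f"{systems} systems need {used_u}U, more than a {rack_u}U rack")
    return {
        "gpus": systems * GPUS_PER_SYSTEM,
        "used_u": used_u,
        "free_u": rack_u - used_u,
    }


if __name__ == "__main__":
    for rack_u, systems in [(42, 8), (52, 12)]:
        print(rack_u, rack_fit(rack_u, systems))
```

For the two configurations on this page it reports 64 GPUs using 32U of a 42U rack and 96 GPUs using 48U of a 52U rack.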

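4U Front I/O or Rear I/O Liquid-Cooled System

For NVIDIA HGX B200 8-GPU

Front I/O DLC-2 liquid-cooled system saves up to 40% data center power, with noise levels as low as 50 dB

Rear I/O liquid-cooled system designed for maximum compute density and performance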

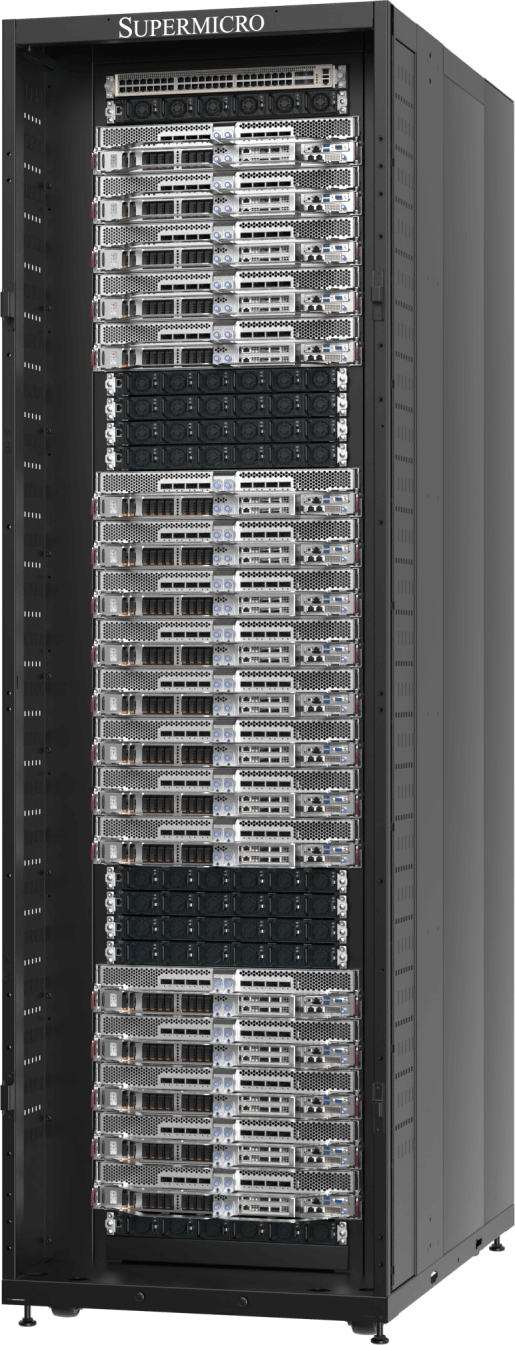

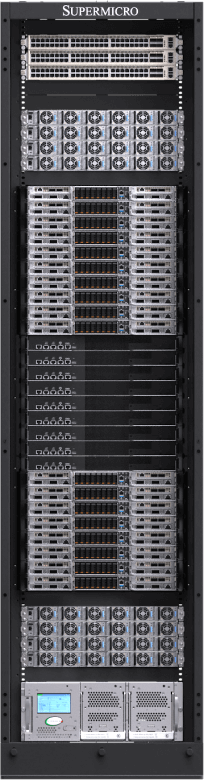

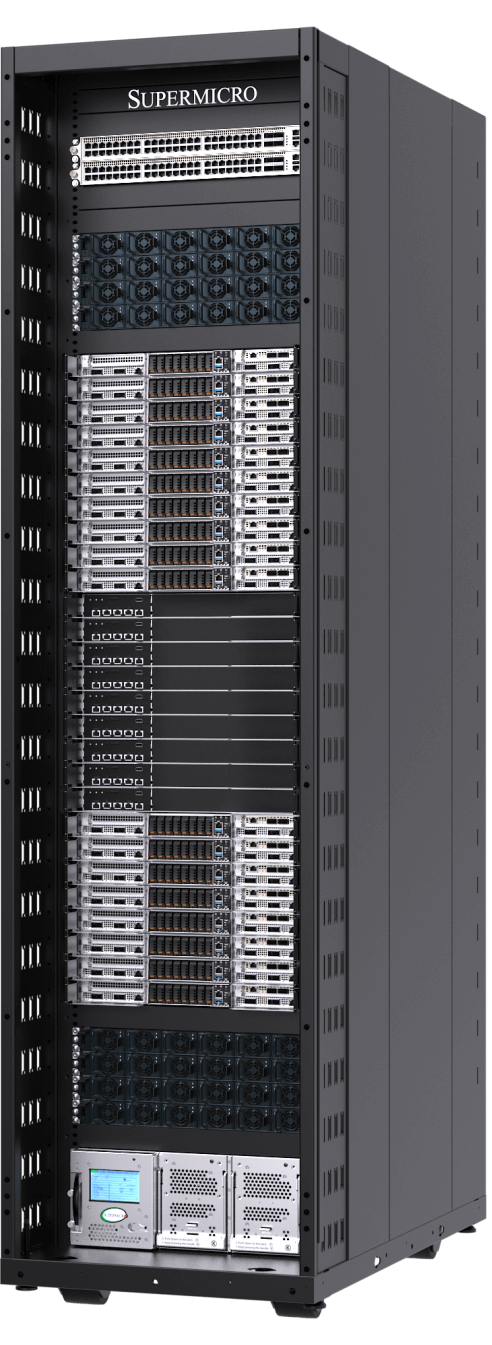

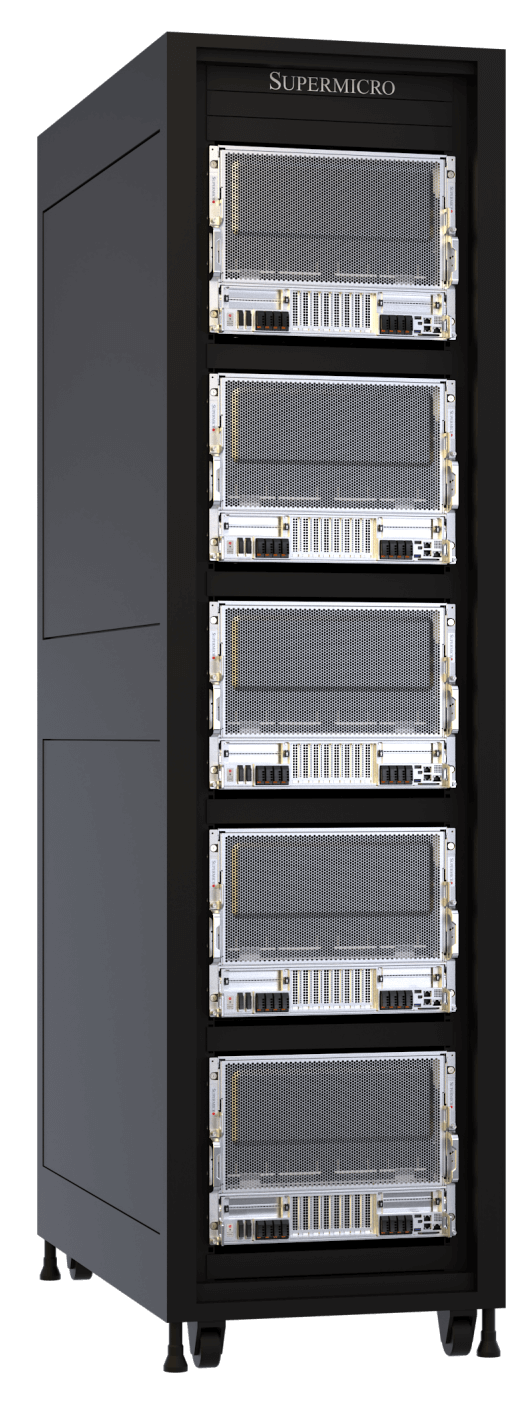

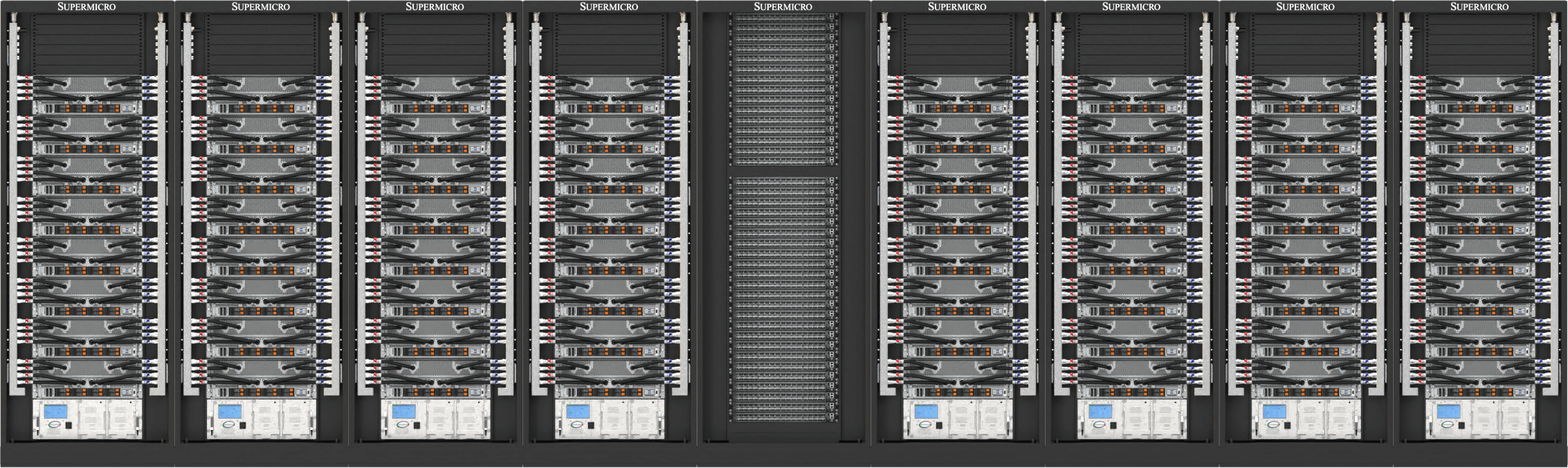

Plug-and-Play Scalable Units, Ready to Deploy for NVIDIA Blackwell

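The new SuperCluster designs offer 42U, 48U, or 52U rack configurations for air-cooled or liquid-cooled data centers, with NVIDIA Quantum InfiniBand or NVIDIA Spectrum™ networking integrated in a centralized rack. The liquid-cooled SuperCluster enables a non-blocking 256-GPU scalable unit in five 42U/48U racks, or an extended 768-GPU scalable unit in nine 52U racks, to meet the needs of the most advanced AI data center deployments. For large deployments, Supermicro also offers in-row CDU options, as well as liquid-to-air cooling rack solutions that require no facility water. The air-cooled SuperCluster design follows the proven, industry-leading architecture of the previous generation, providing a 256-GPU scalable unit in nine 48U racks.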
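
The scalable-unit rack counts are consistent with the per-rack densities given on this page if one centralized networking rack is added to the compute racks. That one-networking-rack split is an assumption for illustration, and `racks_for_su` is a hypothetical helper; per-rack GPU counts come from this page (64 GPUs per 42U liquid-cooled rack, 96 per 52U liquid-cooled rack, and four 8-GPU air-cooled systems, or 32 GPUs, per rack).

```python
import math

# Hypothetical sizing helper for SuperCluster scalable units (SUs).
# Assumption: compute racks are filled to the per-rack GPU density, plus a
# fixed number of centralized networking racks (one by default).


def racks_for_su(total_gpus: int, gpus_per_rack: int, network_racks: int = 1) -> int:
    compute_racks = math.ceil(total_gpus / gpus_per_rack)
    return compute_racks + network_racks


if __name__ == "__main__":
    print(racks_for_su(256, 64))   # 5: liquid-cooled, 42U/48U racks
    print(racks_for_su(768, 96))   # 9: liquid-cooled, 52U racks
    print(racks_for_su(256, 32))   # 9: air-cooled, 48U racks
```

Using `math.ceil` means a partially filled compute rack still counts as a whole rack, which matters only for GPU totals that do not divide evenly.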

End-to-End Data Center Building Block Solutions and Deployment Services for NVIDIA Blackwell

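Leveraging its global manufacturing scale, Supermicro provides a comprehensive one-stop-shop solution, including data center-level solution design, liquid cooling technology, switching, cabling, a full data center management software suite, L11 and L12 solution validation, on-site installation, and professional support and services. With production facilities in San Jose, Europe, and Asia, Supermicro offers unmatched manufacturing capacity for liquid- or air-cooled rack systems, ensuring timely delivery, lower total cost of ownership (TCO), and consistent quality.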

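Supermicro's NVIDIA Blackwell solutions are optimized by integrating NVIDIA Quantum InfiniBand or NVIDIA Spectrum™ networking in a centralized rack for optimal infrastructure scaling and GPU clustering, enabling a non-blocking 256-GPU scalable unit in five racks or an extended 768-GPU scalable unit in nine racks. This architecture natively supports NVIDIA enterprise software and, combined with Supermicro's expertise in deploying the world's largest liquid-cooled data centers, delivers superior efficiency and unmatched time-to-online for today's most ambitious AI data center projects.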

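NVIDIA Quantum InfiniBand and Spectrum Ethernet

From mainstream enterprise servers to high-performance supercomputers, NVIDIA Quantum InfiniBand and Spectrum™ networking technologies enable the most scalable, fastest, and most secure end-to-end networking.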

NVIDIA SuperNICs

Supermicro is at the forefront of adopting NVIDIA SuperNICs: NVIDIA ConnectX for InfiniBand and the NVIDIA BlueField-3 SuperNIC for Ethernet. Every Supermicro NVIDIA HGX B200 system provides 1:1 networking for each GPU, enabling NVIDIA GPUDirect RDMA (InfiniBand) or RoCE (Ethernet) for massively parallel AI computing.

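NVIDIA AI Enterprise Software

Full access to NVIDIA application frameworks, APIs, SDKs, toolkits, and optimizers, with the ability to deploy AI Blueprints, NVIDIA NIM, RAG, and the latest optimized AI foundation models. NVIDIA AI Enterprise software streamlines the development and deployment of production-grade AI applications with enterprise-grade security, support, and stability, ensuring a smooth transition from prototype to production.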