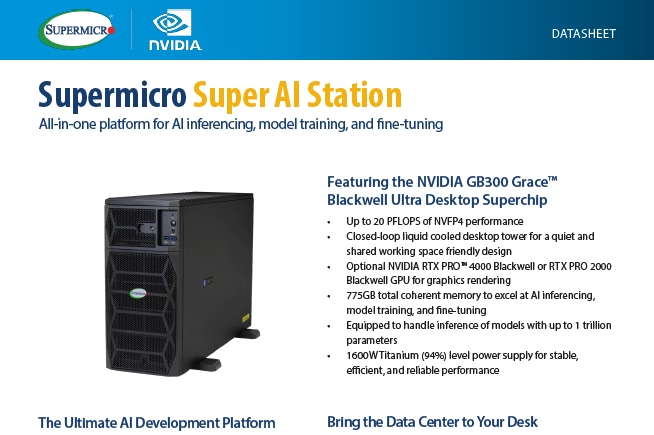

Supermicro Super AI Station

The new Supermicro Super AI Station for AI inferencing, model training, and fine-tuning – featuring the NVIDIA GB300 Grace™ Blackwell Ultra Desktop Superchip

Powerful, flexible multi-workload acceleration from AI factory, to data center, to edge

Join Kitana and Rudy from the Technology Enablement team at Supermicro as they introduce the ideal solution for AI development, the Super AI Station. Supermicro’s ARS-511GD-NB-LCC, featuring the NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip, is a deskside, liquid‑cooled system that delivers data center–class AI performance right under your desk for training, fine‑tuning, and high‑throughput inference on large models.

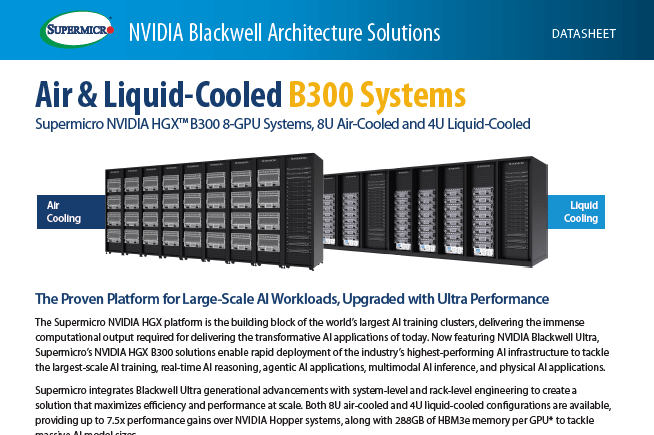

The Proven Platform for Large-Scale AI Workloads, Upgraded with Ultra Performance

Now featuring NVIDIA Blackwell Ultra, Supermicro’s NVIDIA HGX B300 solutions enable rapid deployment of the industry’s highest-performing AI infrastructure to tackle the largest-scale AI training, real-time AI reasoning, agentic AI applications, multimodal AI inference, and physical AI applications.

In this transformative moment for AI, as evolving scaling laws continue to push the limits of data center capabilities, our latest NVIDIA Blackwell-powered solutions, developed in close collaboration with NVIDIA, offer unprecedented computational performance, density, and efficiency with next-generation air-cooled and liquid-cooled architectures. With our readily deployable AI Data Center Building Block solutions, Supermicro is your premier partner to start your NVIDIA Blackwell journey, providing sustainable, cutting-edge solutions that accelerate AI innovation.

AI factories from Supermicro and NVIDIA are complete, turnkey solutions that simplify the deployment of AI at any scale. They are first to market and backed by rack-level integration for complete deployment confidence.

Servers with NVIDIA Blackwell GPUs Accelerate a Variety of Applications

Accelerate NVIDIA Blackwell Deployment for a Diverse Range of AI Factory Environments

Supermicro’s Front I/O NVIDIA HGX B200 systems simplify the deployment, management, and maintenance of air- or liquid-cooled AI infrastructure. Front I/O access simplifies cabling, improves thermal efficiency and compute density, and reduces operational expenses (OPEX).

Supermicro’s SuperCluster, accelerated by the NVIDIA Blackwell Platform, empowers the next stage of AI, defined by new breakthroughs including the evolution of scaling laws and the rise of reasoning models. These new liquid-cooled SuperCluster offerings are available in 42U, 48U, or 52U rack configurations. The upgraded cold plates and 250 kW coolant distribution unit (CDU) more than double the cooling capacity of the previous generation. The new vertical coolant distribution manifold (CDM) means that horizontal manifolds no longer occupy valuable rack space. NVIDIA Quantum InfiniBand or NVIDIA Spectrum™ networking in a centralized rack enables a non-blocking, 256-GPU scalable unit in five racks, or an extended 768-GPU scalable unit in nine racks.

Supermicro’s SuperCluster, accelerated by the NVIDIA Blackwell Platform, is also available in an air-cooled version built from the new Supermicro NVIDIA HGX B200 8-GPU systems. Featuring a redesigned 10U chassis that accommodates the thermal demands of its leading-edge AI compute performance, it is designed to tackle heavy AI workloads of all types, from training to fine-tuning to inference. NVIDIA Quantum InfiniBand or NVIDIA Spectrum™ networking in a centralized rack enables a non-blocking, 256-GPU scalable unit in nine racks.
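
As a rough check on the rack counts quoted above, the scalable-unit figures can be broken down per rack. The following is a minimal Python sketch under two assumptions that this page does not state: exactly one rack in each scalable unit is the centralized networking rack, and every other rack holds only 8-GPU HGX systems (8-GPU systems are stated above only for the air-cooled configuration). The function name `compute_rack_density` is hypothetical.

```python
# Hypothetical helper: derives per-rack density from the scalable-unit figures
# quoted above. ASSUMPTIONS (not from the source): exactly one rack per unit is
# the centralized networking rack, and all other racks hold 8-GPU HGX systems.

GPUS_PER_SYSTEM = 8  # assumed 8-GPU HGX system in every compute rack


def compute_rack_density(total_gpus: int, total_racks: int, network_racks: int = 1):
    """Return (compute racks, GPUs per compute rack, systems per compute rack)."""
    compute_racks = total_racks - network_racks
    if compute_racks <= 0:
        raise ValueError("need at least one compute rack")
    if total_gpus % compute_racks:
        raise ValueError("GPUs do not divide evenly across compute racks")
    gpus_per_rack = total_gpus // compute_racks
    if gpus_per_rack % GPUS_PER_SYSTEM:
        raise ValueError("GPUs per rack is not a whole number of systems")
    return compute_racks, gpus_per_rack, gpus_per_rack // GPUS_PER_SYSTEM


# Scalable-unit sizes as stated on this page: (total GPUs, total racks).
configs = {
    "liquid-cooled, 256-GPU unit": (256, 5),
    "liquid-cooled, 768-GPU unit": (768, 9),
    "air-cooled, 256-GPU unit": (256, 9),
}

for name, (gpus, racks) in configs.items():
    compute_racks, per_rack, systems = compute_rack_density(gpus, racks)
    print(f"{name}: {compute_racks} compute racks x {per_rack} GPUs "
          f"({systems} systems per rack)")
```

Under these assumptions, the air-cooled unit works out to four 10U systems (40U) per compute rack, which is consistent with the redesigned 10U chassis. The liquid-cooled per-rack counts are inferences from the totals, not published specifications.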

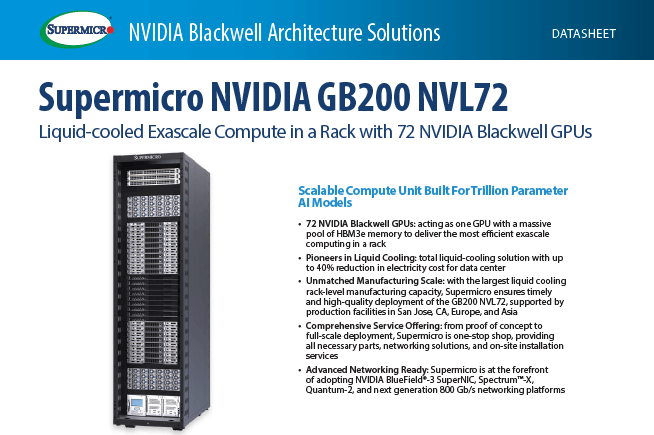

Liquid-cooled Exascale Compute in a Rack with 72 NVIDIA Blackwell GPUs