GPU Server Systems

Unrivaled GPU Systems: Deep Learning-Optimized Servers for the Modern Data Center

NVIDIA GB300 NVL72

Liquid Cooled Rack-Scale Solution with 72 NVIDIA B300 GPUs and 36 Grace CPUs

- GPU: 72 NVIDIA B300 GPUs via NVIDIA Grace Blackwell Superchips

- CPU: 36 NVIDIA Grace CPUs via NVIDIA Grace Blackwell Superchips

- Memory: Up to 21 TB HBM3e (GPU Memory), Up to 17 TB LPDDR5X (System Memory)

- Drives: up to 144 E1.S PCIe 5.0 drive bays

Liquid-Cooled Universal GPU Systems

Direct-to-chip liquid-cooled systems for high-density AI infrastructure at scale.

- GPU: NVIDIA HGX B300/B200/H200

- CPU: Intel® Xeon® or AMD EPYC™

- Memory: Up to 32 DIMMs, 9TB

- Drives: Up to 24 Hot-swap U.2 or 2.5" NVMe/SATA drives

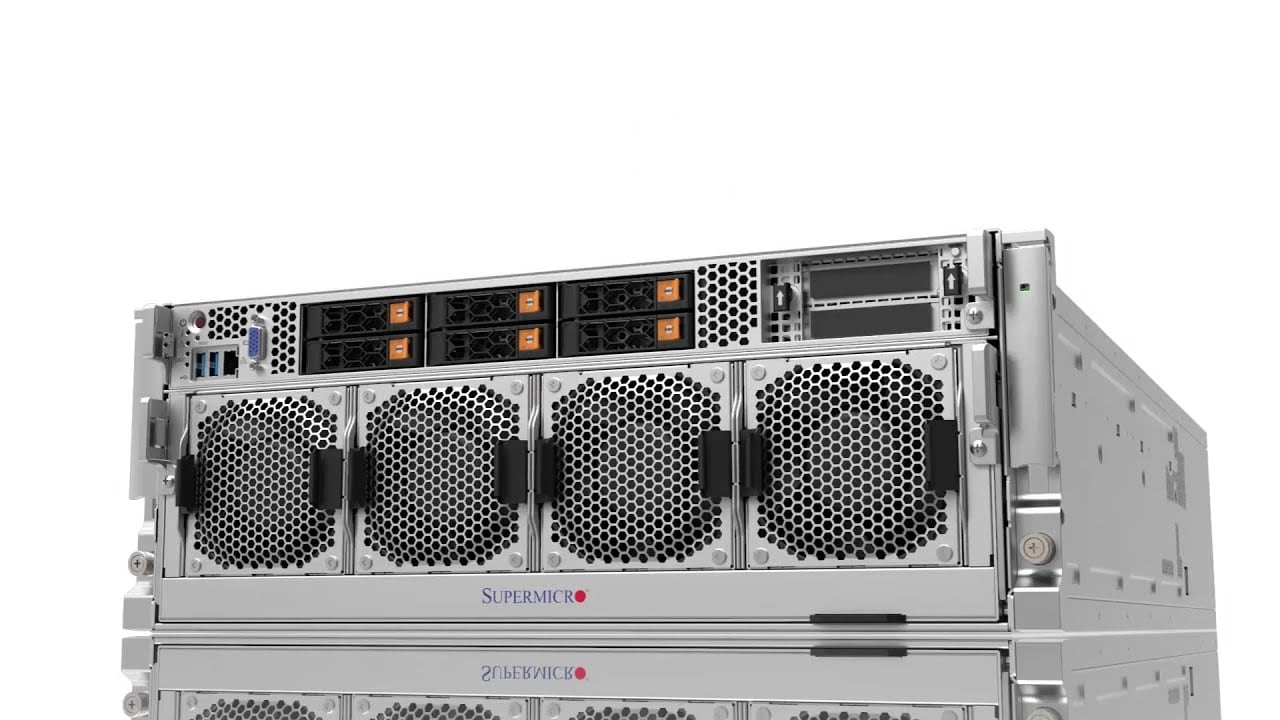

Universal GPU Systems

Modular Building Block Design, Future Proof Open-Standards Based Platform in 4U, 5U, 8U, or 10U for Large Scale AI training and HPC Applications

- GPU: NVIDIA HGX B300/B200/H200, AMD Instinct MI350 Series and MI325X/MI300X/MI250 OAM Accelerator, Intel Data Center GPU Max Series

- CPU: Intel® Xeon® or AMD EPYC™

- Memory: Up to 32 DIMMs, 9TB

- Drives: Up to 24 Hot-swap E1.S, U.2 or 2.5" NVMe/SATA drives

3U/4U/5U GPU Lines with PCIe 5.0

Maximum Acceleration and Flexibility for AI, Deep Learning and HPC Applications

- GPU: Up to 10 NVIDIA H100 PCIe GPUs, or up to 10 double-width PCIe GPUs

- CPU: Intel® Xeon® or AMD EPYC™

- Memory: Up to 32 DIMMs, 9TB or 12TB

- Drives: Up to 24 Hot-swap 2.5" SATA/SAS/NVMe

NVIDIA MGX™ Systems

Modular Building Block Platform Supporting Today's and Future GPUs, CPUs, and DPUs

- GPU: Up to 4 NVIDIA PCIe GPUs including NVIDIA RTX PRO™ 6000 Blackwell Server Edition, H200 NVL, H100 NVL, and L40S

- CPU: NVIDIA GH200 Grace Hopper™ Superchip, Grace™ CPU Superchip, or Intel® Xeon®

- Memory: Up to 960GB integrated LPDDR5X memory (Grace Hopper or Grace CPU Superchip) or 16 DIMMs, up to 4TB DRAM (Intel)

- Drives: Up to 8 E1.S + 4 M.2 drives

AMD APU Systems

Multi-processor system combining CPU and GPU, Designed for the Convergence of AI and HPC

- GPU: 4 AMD Instinct™ MI300A Accelerated Processing Units (APUs)

- CPU: AMD Instinct™ MI300A Accelerated Processing Unit (APU)

- Memory: Up to 512GB integrated HBM3 memory (4x 128GB)

- Drives: Up to 8 2.5" NVMe or Optional 24 2.5" SATA/SAS via storage add-on card + 2 M.2 drives

4U GPU Lines with PCIe 4.0

Flexible Design for AI and Graphically Intensive Workloads, Supporting Up to 10 GPUs

- GPU: NVIDIA HGX A100 8-GPU with NVLink, or up to 10 double-width PCIe GPUs

- CPU: Intel® Xeon® or AMD EPYC™

- Memory: Up to 32 DIMMs, 8TB DRAM or 12TB DRAM + PMem

- Drives: Up to 24 Hot-swap 2.5" SATA/SAS/NVMe

2U 2-Node Multi-GPU with PCIe 4.0

Dense and Resource-saving Multi-GPU Architecture for Cloud-Scale Data Center Applications

- GPU: Up to 3 double-width PCIe GPUs per node

- CPU: Intel® Xeon® or AMD EPYC™

- Memory: Up to 8 DIMMs, 2TB per node

- Drives: Up to 2 front hot-swap 2.5" U.2 per node

GPU Workstation

Flexible Solution for AI/Deep Learning Practitioners and High-end Graphics Professionals

- GPU: Up to 4 double-width PCIe GPUs

- CPU: Intel® Xeon®

- Memory: Up to 16 DIMMs, 6TB

- Drives: Up to 8 hot-swap 2.5" SATA/NVMe

Supermicro JumpStart Review: A Week With an NVIDIA HGX B200

Supermicro’s JumpStart program takes a very different approach to hardware evaluation. Instead of a short, scripted demo in a shared lab environment, JumpStart gives qualified customers free, time-boxed, bare-metal access to a catalog of real production servers.

Securing AI Workloads with Intel® TDX, NVIDIA Confidential Computing and Supermicro Servers with NVIDIA HGX™ B200 GPUs: A Foundation for Confidential AI at Scale

Read the White Paper

Sakura Internet Koukaryoku Cloud Services Accelerate AI Services

Read the Success Story

Supermicro SYS-821GE-TNHR 8x NVIDIA H200 GPU Air Cooled AI Server

Today we continue our look at massive AI servers with a look at the Supermicro SYS-821GE-TNHR. When folks discuss Supermicro’s AI server prowess, this is one of the systems that is different in the market as an air-cooled NVIDIA server.

Supermicro with GAUDI 3 AI Delivers Scalable Performance for AI Requirements

Read the White Paper

Supermicro and Intel GAUDI 3 Systems Advance Enterprise AI Infrastructure

Read the Product Brief

Supermicro Grace System Delivers 4X Performance For ANSYS® LS-DYNA®

Read the Solution Brief

Applied Digital Builds Massive AI Cloud With Supermicro GPU Servers

Read the Success Story

Supermicro 4U AMD EPYC GPU Servers Offer AI Flexibility (AS-4125GS-TNRT)

Supermicro has long offered GPU servers in more shapes and sizes than we have time to discuss in this review. Today, we’re looking at their relatively new 4U air-cooled GPU server that supports two AMD EPYC 9004 Series CPUs, PCIe Gen5, and a choice of eight double-width or 12 single-width add-in GPU cards.

Supermicro And Nvidia Create Solutions To Accelerate CFD Simulations For Automotive And Aerospace Industries

Read the Solution Brief

A Look at the Liquid Cooled Supermicro SYS-821GE-TNHR 8x NVIDIA H100 AI Server

Today we wanted to take a look at the liquid cooled Supermicro SYS-821GE-TNHR server. This is Supermicro’s 8x NVIDIA H100 system with a twist: it is liquid cooled for lower cooling costs and power consumption. Since we had the photos, we figured we would put this into a piece.

Options For Accessing PCIe GPUs in a High Performance Server Architecture

Read the Product Brief

Petrobras Acquires Supermicro Servers Integrated by Atos To Reduce Costs and Increase Exploration Accuracy

Read the Success Story

Supermicro And ProphetStor Maximize GPU Efficiency For Multitenant LLM Training

Read the Solution Brief

Supermicro Servers Increase GPU Offerings For SEEWEB, Giving Demanding Customers Faster Results For AI and HPC Workloads

Read the Success Story

3000W AMD Epyc Server Tear-Down, ft. Wendell of Level1Techs

We are looking at a server optimized for AI and machine learning. Supermicro has done a lot of work to cram as much as possible into 2114GT-DNR (2U2N) - a density optimized server. This is a really cool construction: there are two systems in this 2U chassis. The two redundant power supplies are 2,600W each and we'll see why we need so much power. It hosts six AMD MI210 Instinct GPUs and the dual Epyc processors. See the level of engineering Supermicro put into the design of this server.

NEC Advances AI Research With Advanced GPU Systems From Supermicro

Read the Success Story

Supermicro TECHTalk: High-Density AI Training/Deep Learning Server

Hybrid 2U2N GPU Workstation-Server Platform Supermicro SYS-210GP-DNR Hands-on

Today we are finishing our latest series by taking a look at the Supermicro SYS-210GP-DNR, a 2U, 2-node 6 GPU system that Patrick recently got some hands-on time with at Supermicro headquarters.

Supermicro SYS-220GQ-TNAR+ a NVIDIA Redstone 2U Server

Today we are looking at the Supermicro SYS-220GQ-TNAR+ that Patrick recently got some hands-on time with at Supermicro headquarters.

Unveiling GPU System Design Leap - Supermicro SC21 TECHTalk with IDC

Maximizing AI Development & Delivery with Virtualized NVIDIA A100 GPUs

Read the Solution Brief

Supermicro SuperMinute: 2U 2-Node Server

SuperMinute: 4U System with HGX A100 8-GPU