NVIDIA Blackwell Ultra Systems, Now Shipping

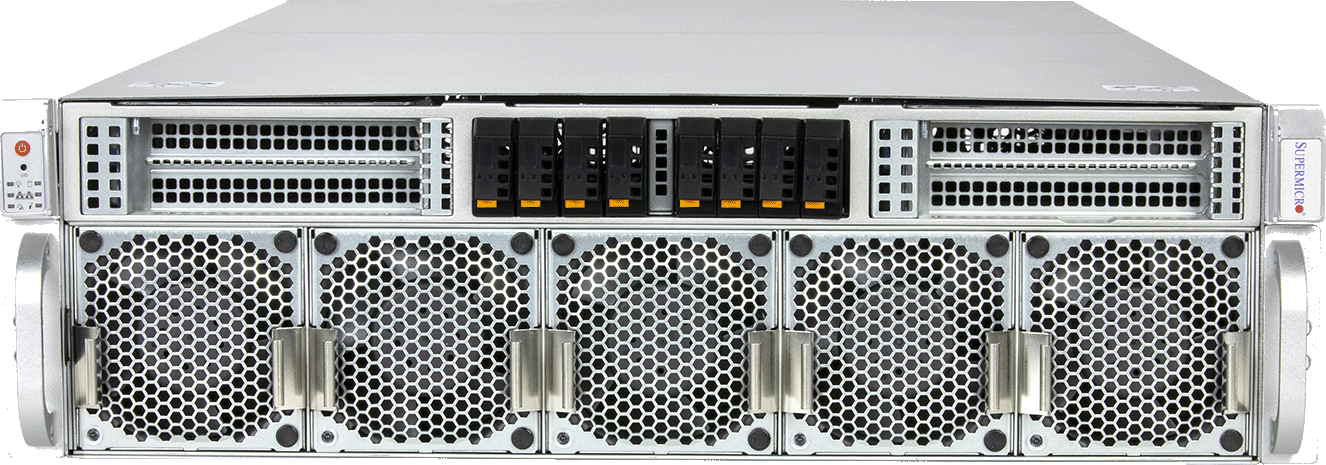

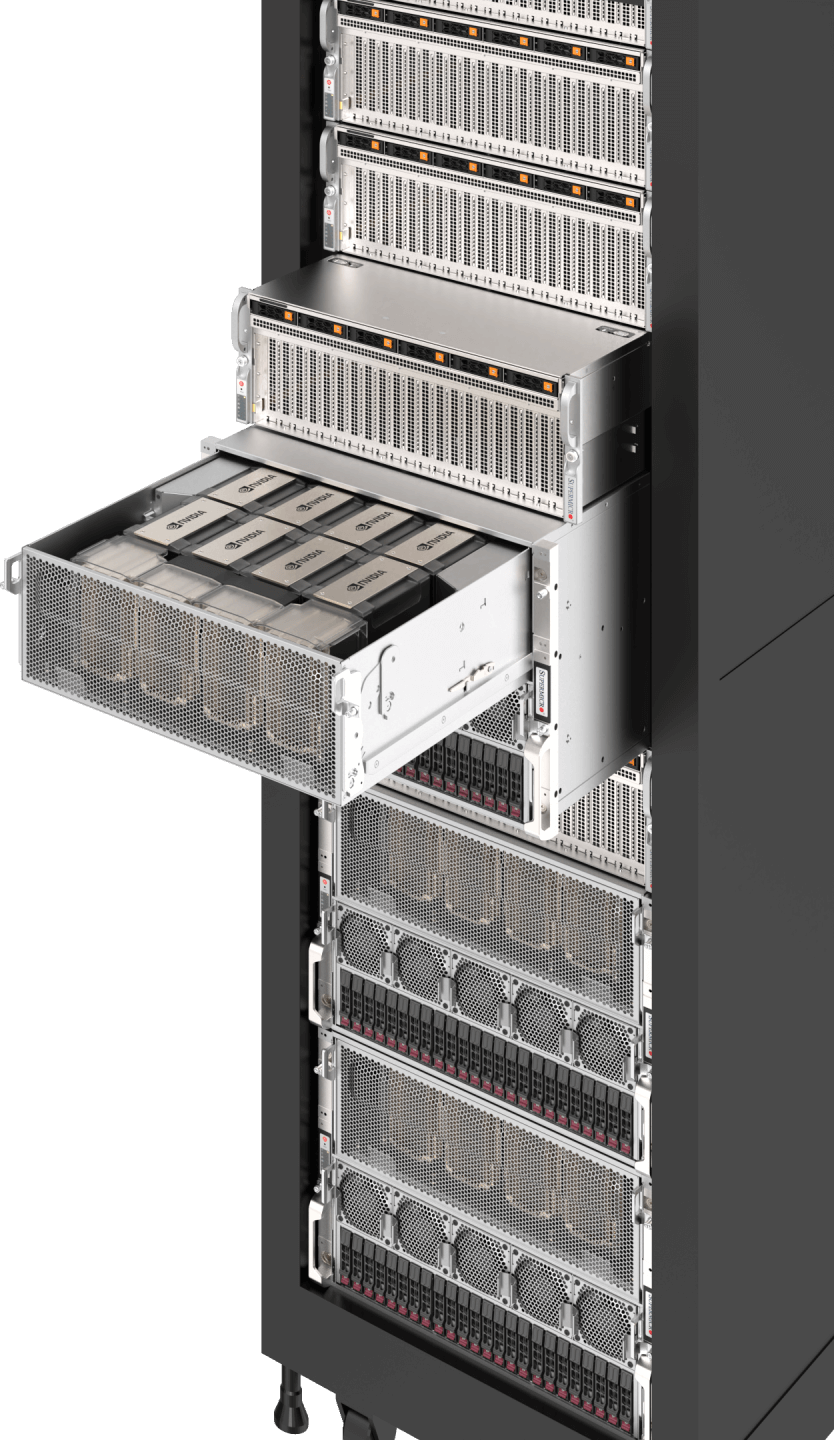

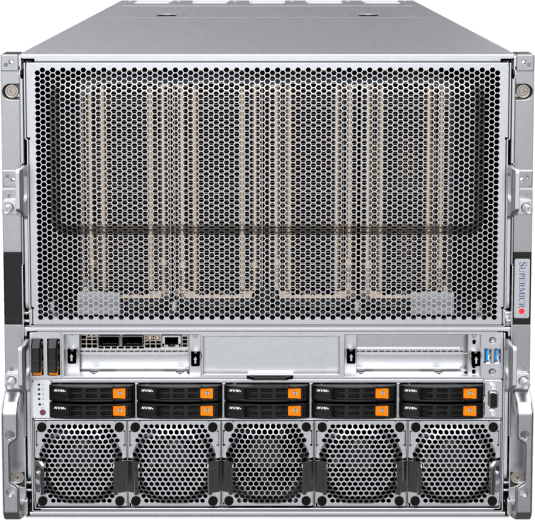

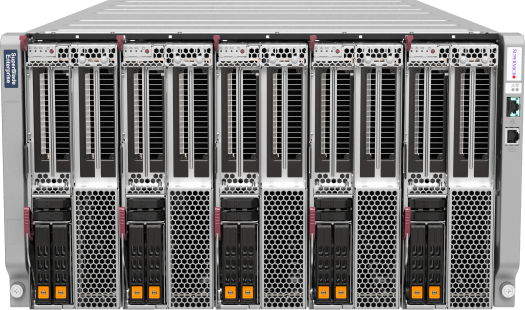

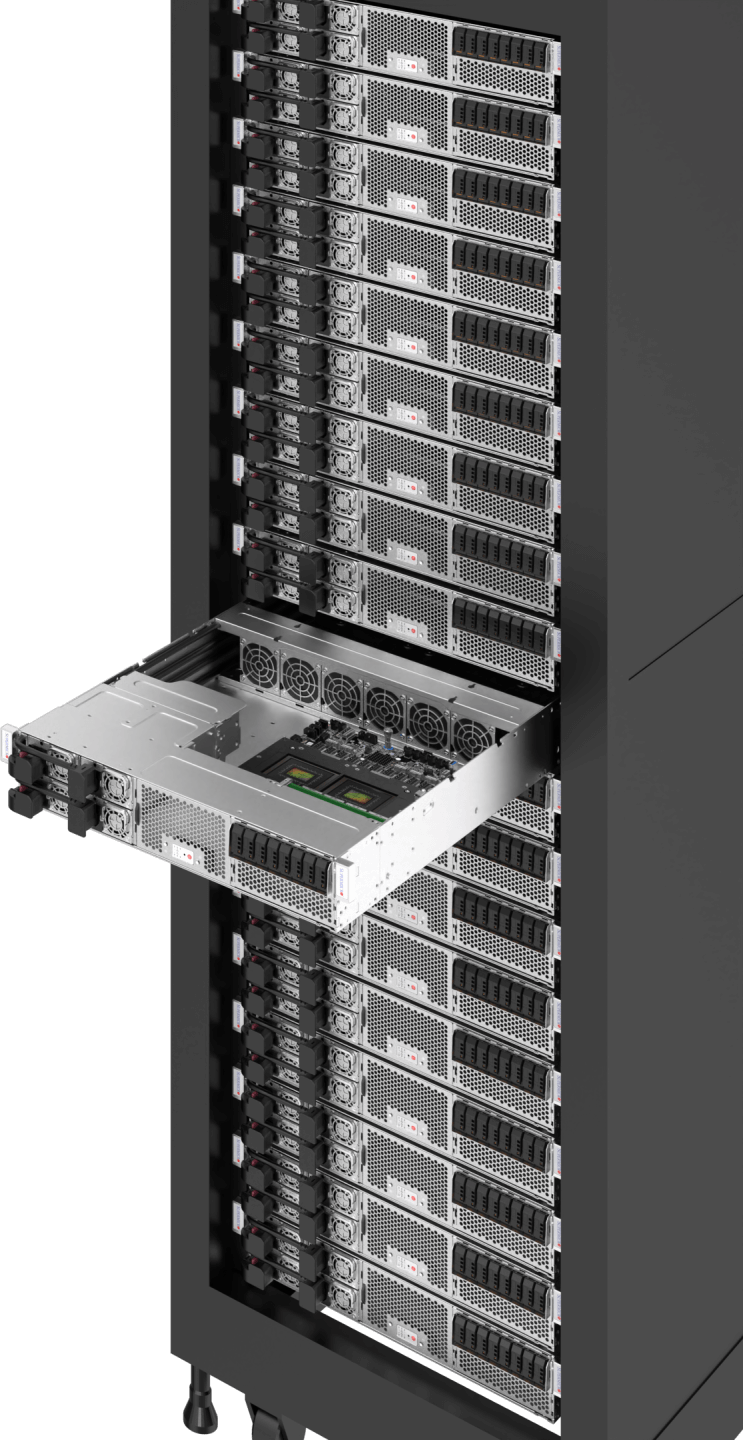

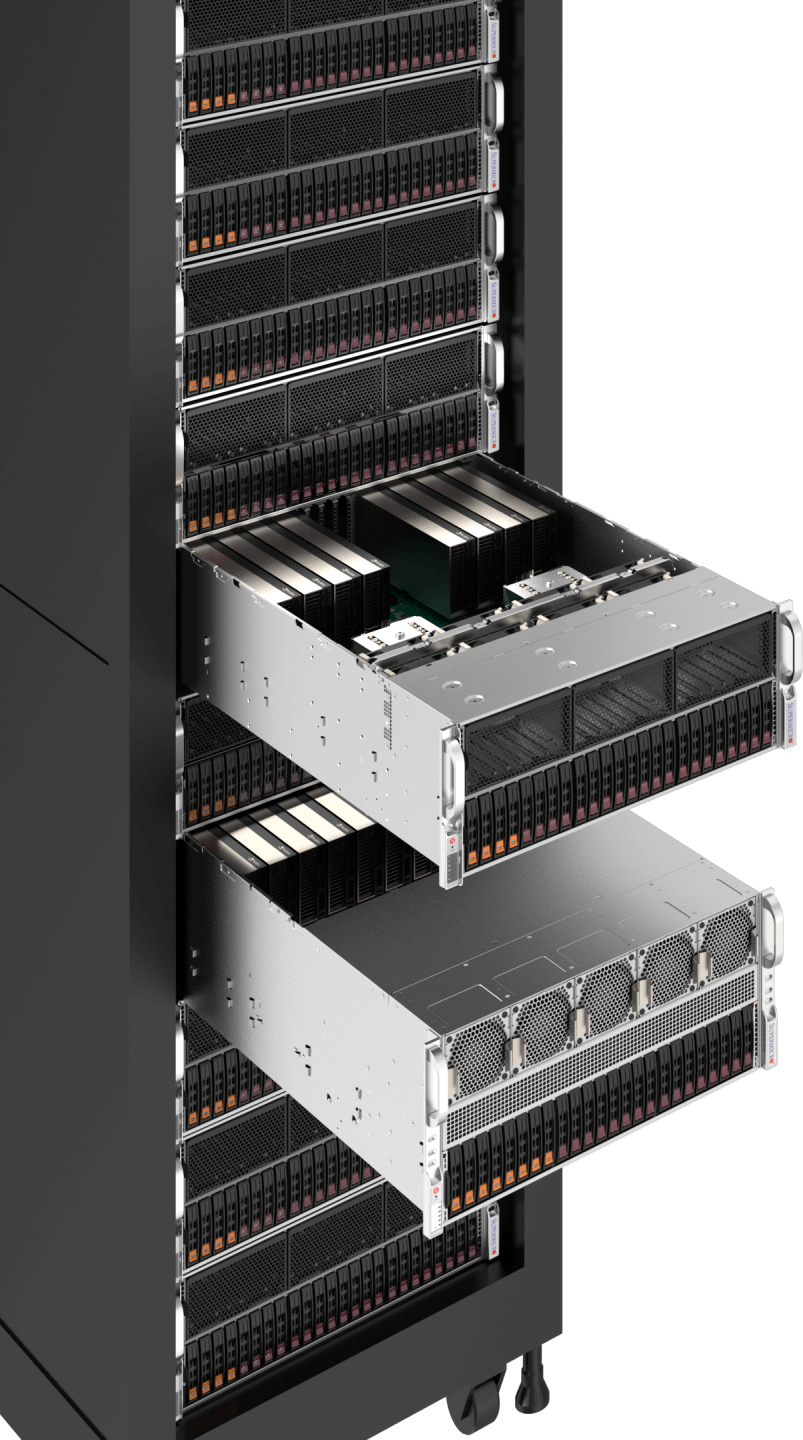

NVIDIA GB300 NVL72 SuperCluster

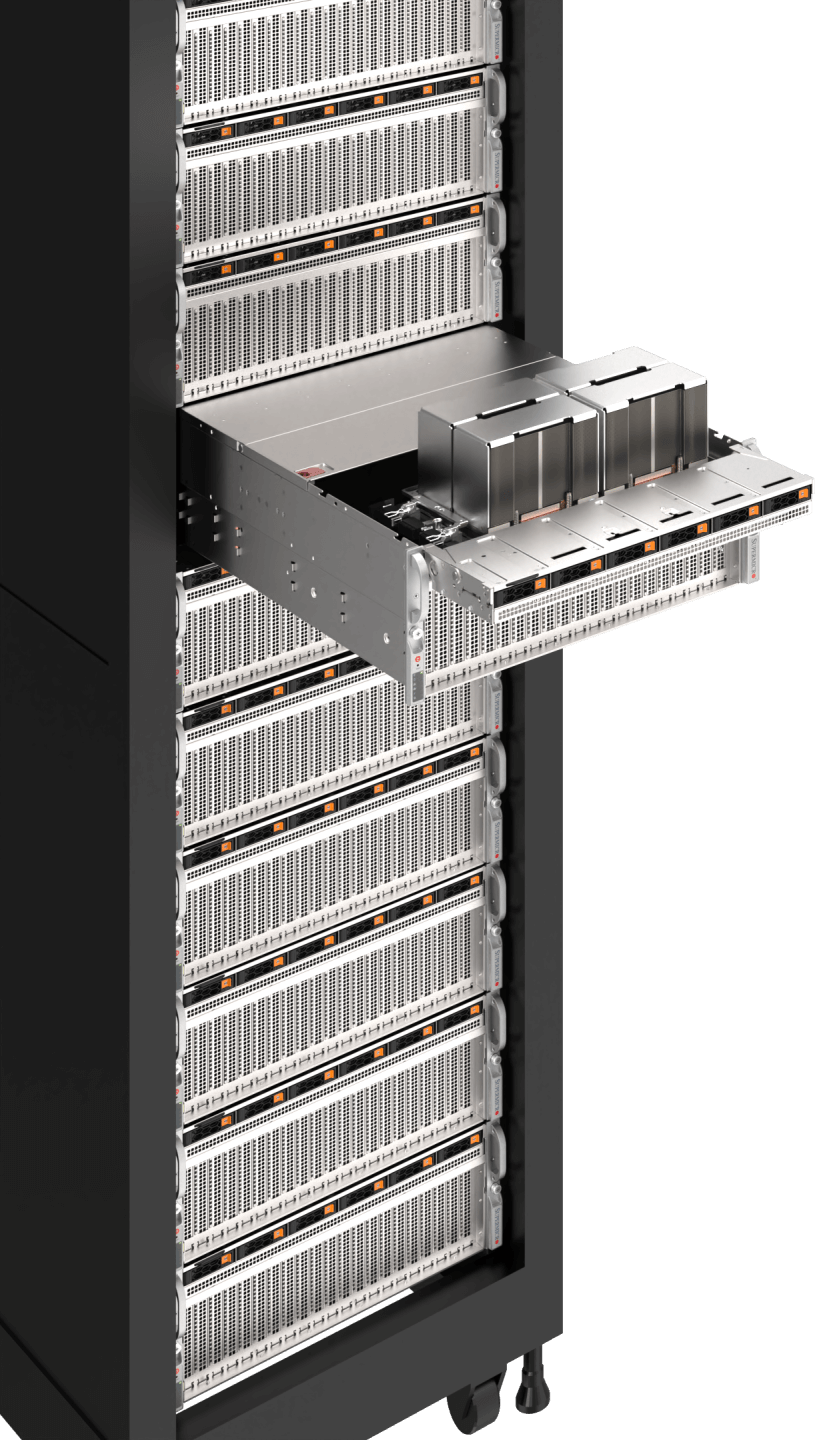

xAI Colossus

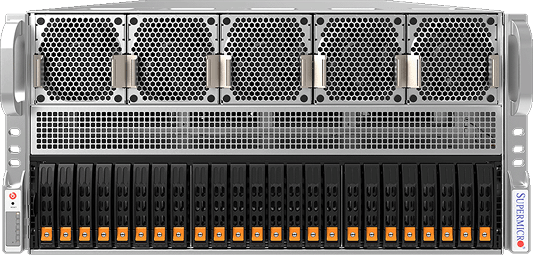

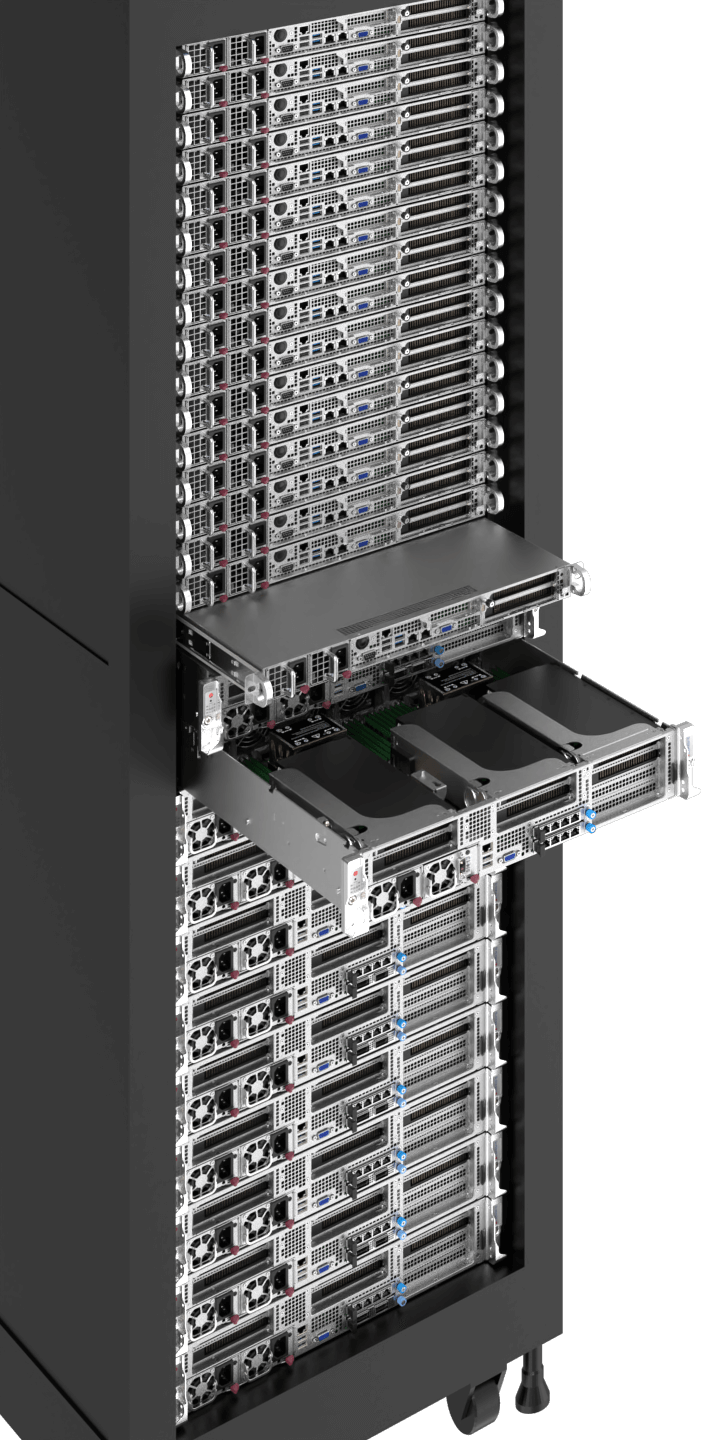

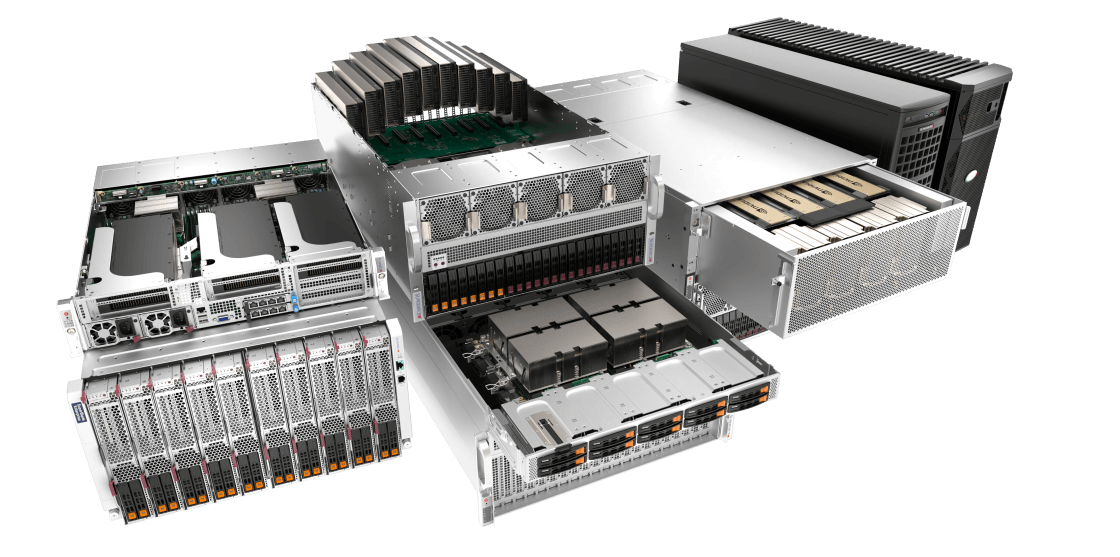

Unlock the full potential of AI with Supermicro’s cutting-edge AI-ready infrastructure solutions. From large-scale training to intelligent edge inferencing, our turn-key reference designs streamline and accelerate AI deployment. Empower your workloads with optimal performance and scalability while optimizing costs and minimizing environmental impact. Discover a world of possibilities with Supermicro’s diverse selection of AI workload-optimized solutions and accelerate every aspect of your business.

Large Scale AI Training & Inference

Large Language Models, Generative AI Training, Autonomous Driving, Robotics

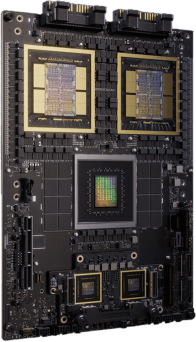

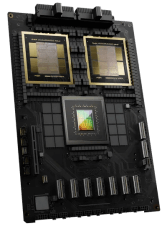

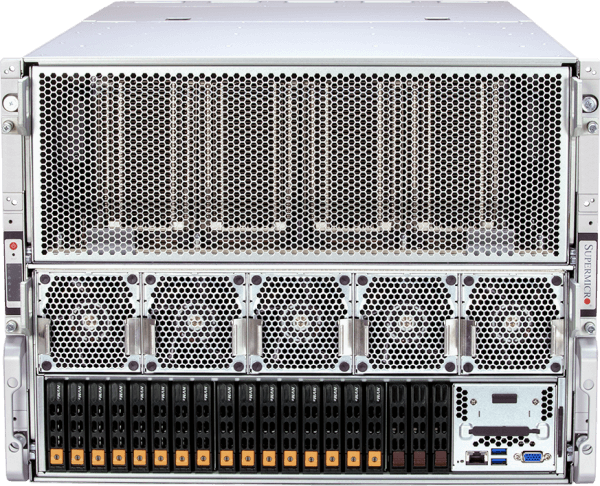

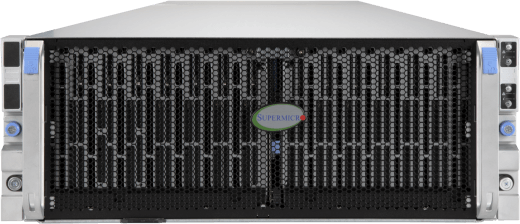

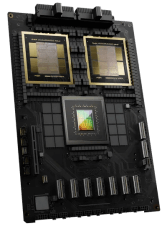

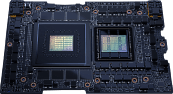

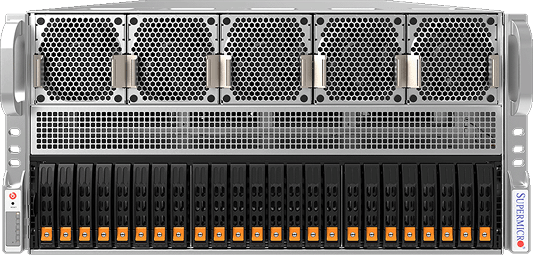

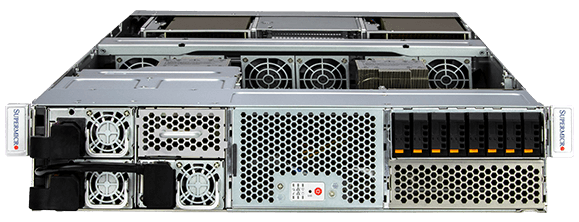

Large-scale AI training demands cutting-edge technologies that maximize the parallel computing power of GPUs, so that models with billions or even trillions of parameters can be trained on massive datasets. Built on NVIDIA HGX™ B300/B200 and GB300/GB200 NVL72 platforms, with NVLink® and NVSwitch® GPU-to-GPU interconnects delivering up to 1.8TB/s of bandwidth and 1:1 networking to each GPU for node clustering, these systems are optimized to train large language models from scratch and serve them to millions of concurrent users. Completing the stack with all-flash NVMe storage for a fast AI data pipeline, Supermicro provides fully integrated racks with liquid cooling options to ensure fast deployment and a smooth AI training experience.
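To give a rough sense of the scale involved, the sketch below estimates the raw size of a model's weights and the ideal time to move that much data over a single 1.8TB/s link. It is a back-of-envelope illustration only: the 2 bytes per parameter (16-bit weights), the decimal definition of a terabyte, and the example model sizes are assumptions, and the function names are hypothetical. Real training also needs memory for optimizer state, gradients, and activations, and real transfers rarely reach peak bandwidth.

```python
# Back-of-envelope sizing sketch. All defaults are illustrative assumptions,
# not Supermicro or NVIDIA specifications, except the 1.8 TB/s peak figure
# quoted in the text above.

TB = 1e12  # assumption: decimal terabyte (10^12 bytes)


def weights_size_bytes(num_params: int, bytes_per_param: int = 2) -> int:
    """Size of the model weights alone, assuming 16-bit (2-byte) parameters."""
    return num_params * bytes_per_param


def ideal_transfer_seconds(num_bytes: float, bandwidth_bytes_per_s: float = 1.8 * TB) -> float:
    """Lower bound on transfer time at peak bandwidth, with no protocol overhead."""
    return num_bytes / bandwidth_bytes_per_s


if __name__ == "__main__":
    # Hypothetical model sizes spanning "billions" to "trillions" of parameters.
    for params in (10_000_000_000, 1_000_000_000_000):
        size = weights_size_bytes(params)
        seconds = ideal_transfer_seconds(size)
        print(f"{params:,} params: {size / TB:.2f} TB of weights, "
              f"at least {seconds:.3f} s over one 1.8 TB/s link")
```

Even under these optimistic assumptions, the weights of a trillion-parameter model exceed the memory of any single GPU, which is why high-bandwidth GPU-to-GPU interconnects and multi-node clustering matter for workloads of this size.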

Workload Sizes

- Extra Large

- Large

- Medium

- Storage

Liquid-cooled NVIDIA HGX B300/B200 Systems and Racks

NVIDIA GB300 NVL72 with Supermicro Liquid Cooling

NVIDIA GB200 NVL72 with Supermicro Liquid Cooling

Air-cooled NVIDIA HGX B300/B200 Systems and Racks

8U System with NVIDIA HGX H200 8-GPU

Petabyte Scale NVMe Flash

Petabyte Scale HDD Storage

Resources

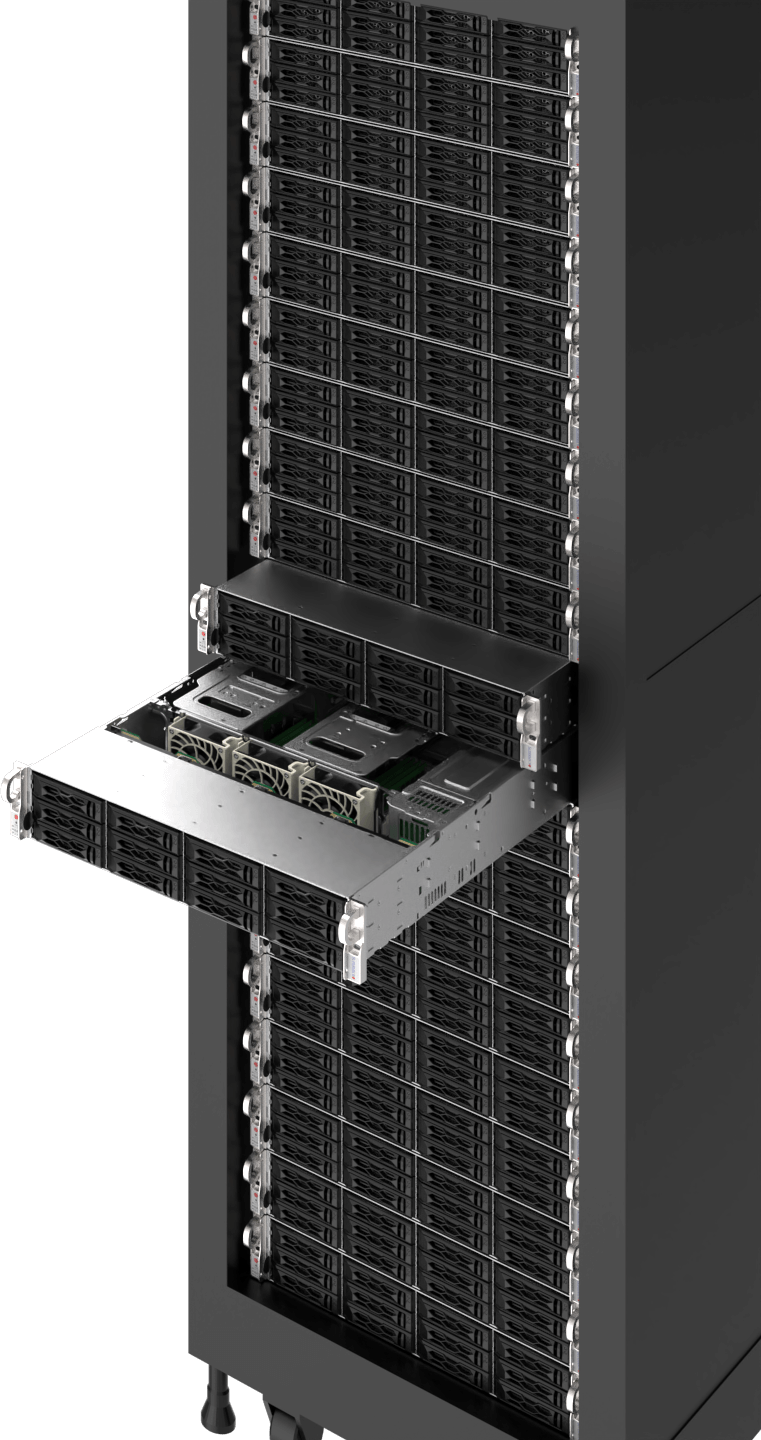

HPC/AI

Engineering Simulation, Scientific Research, Genomic Sequencing, Drug Discovery

To accelerate time to discovery for scientists, researchers, and engineers, more and more HPC workloads are being augmented with machine learning algorithms and GPU-accelerated parallel computing to achieve faster results. Many of the world’s fastest supercomputing clusters now take advantage of GPUs and the power of AI.

HPC workloads typically involve data-intensive simulations and analytics with massive datasets and strict precision requirements. GPUs such as NVIDIA’s H100/H200 deliver up to 60 teraflops of double-precision performance per GPU, and Supermicro’s highly flexible HPC platforms support high GPU and CPU counts in a variety of dense form factors with rack-scale integration and liquid cooling.
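As a simple illustration of how the per-GPU figure adds up in a dense system, the sketch below multiplies the 60 teraflops quoted above by a GPU count and a node count. The function name and the node counts are hypothetical, and the result is a theoretical peak that assumes perfect scaling; sustained application performance is typically lower.

```python
# Theoretical peak only: assumes every GPU reaches the quoted per-GPU figure
# and that performance scales perfectly across GPUs and nodes.

def aggregate_peak_teraflops(gpus_per_node: int,
                             nodes: int = 1,
                             per_gpu_teraflops: float = 60.0) -> float:
    """Sum of per-GPU peak double-precision teraflops across a cluster."""
    return gpus_per_node * nodes * per_gpu_teraflops


if __name__ == "__main__":
    # One 8-GPU system, then a hypothetical 4-node cluster of such systems.
    print(aggregate_peak_teraflops(8))
    print(aggregate_peak_teraflops(8, nodes=4))
```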

Workload Sizes

- Large

- Medium

8U/10U System with NVIDIA HGX B200 8-GPU

NVIDIA GB200 NVL4

6U/8U SuperBlade®

3U/4U/5U 8-10 GPU PCIe

1U Grace Hopper System

Resources

Enterprise AI Inference & Training

Generative AI Inference, AI-enabled Services/Applications, Chatbots, Recommender System, Business Automation

Generative AI is widely recognized as the next frontier for industries ranging from tech to banking and media. The race is on to adopt AI as a way to drive innovation, significantly boost productivity, streamline operations, make data-driven decisions, and improve customer experience.

Whether the goal is AI-assisted applications and business models, intelligent human-like chatbots for customer service, or AI copilots for code generation and content creation, enterprises can leverage open frameworks, libraries, and pre-trained AI models, and fine-tune those models for unique use cases with their own datasets. As enterprises adopt AI infrastructure, Supermicro’s variety of GPU-optimized systems provides an open modular architecture, vendor flexibility, and easy deployment and upgrade paths for rapidly evolving technologies.

Workload Sizes

- Extra Large

- Large

- Medium

3U/4U/5U 8-10 GPU PCIe

6U SuperBlade®

2U MGX System

2U Grace MGX System

Resources

Visualization & Design

Real-Time Collaboration, 3D Design, Game Development

The increased fidelity of 3D graphics and AI-enabled applications on modern GPUs is accelerating industrial digitalization. True-to-reality 3D simulations are transforming product development and design, manufacturing, and content creation, enabling higher quality, virtually unlimited iterations at no opportunity cost, and faster time-to-market.

Build virtual production infrastructure at scale to accelerate industrial digitalization with Supermicro’s fully integrated solutions, including the 4U/5U 8-10 GPU systems, an NVIDIA OVX™ reference architecture optimized for NVIDIA Omniverse Enterprise with Universal Scene Description (USD) connectors, and NVIDIA-certified rackmount servers and multi-GPU workstations.

Workload Sizes

- Large

- Medium

Resources

Content Delivery & Virtualization

Content Delivery Networks (CDNs), Transcoding, Compression, Cloud Gaming/Streaming

Video delivery workloads continue to make up a significant portion of Internet traffic. As streaming service providers increasingly offer content in 4K and even 8K, and cloud gaming at higher refresh rates, GPU acceleration with media engines is essential to multiply throughput for streaming pipelines. The latest technologies, such as AV1 encoding and decoding, also reduce the amount of data required while delivering better visual fidelity.

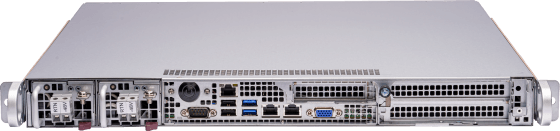

Supermicro’s multi-node and multi-GPU systems, such as the 2U 4-Node BigTwin® system, meet the stringent requirements of modern video delivery. Each node supports the NVIDIA L4 GPU, along with ample PCIe Gen5 storage and networking bandwidth to drive the demanding data pipeline for content delivery networks.

Workload Sizes

- Large

- Medium

- Small

Resources

Edge AI

Edge Video Transcoding, Edge Inference, Edge Training

Across industries, businesses whose employees and customers engage at edge locations – in cities, factories, retail stores, hospitals, and many more – are increasingly investing in deploying AI at the edge. By processing data and utilizing AI and ML algorithms at the edge, businesses overcome bandwidth and latency limitations, enabling real-time analytics for timely decision making, predictive care and personalized services, and streamlined business operations.

Purpose-built, environment-optimized Supermicro Edge AI servers come in various compact form factors and deliver the performance needed for low-latency workloads. Their open architecture, pre-integrated components, broad hardware and software stack compatibility, and privacy and security feature sets meet the requirements of complex edge deployments out of the box.

Workload Sizes

- Extra Large

- Large

- Medium

- Small

Resources

Broadest Portfolio of AI-Ready Systems

Deploy NVIDIA Omniverse™ at Scale

COMPUTEX 2024 CEO Keynote