Supermicro

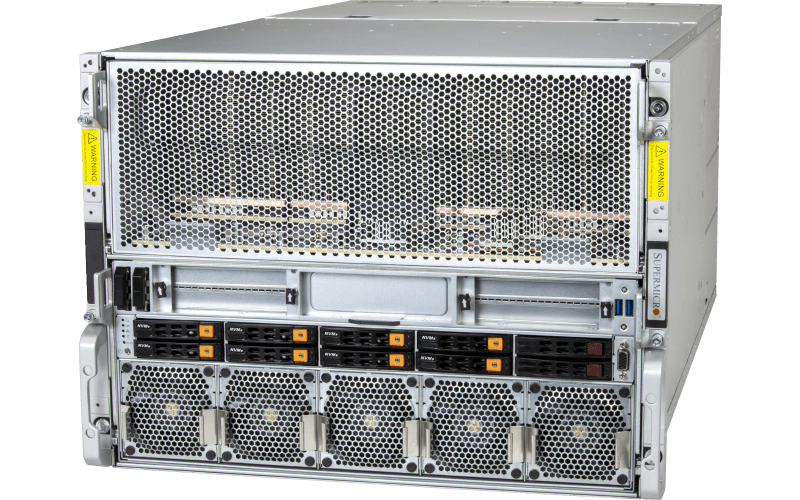

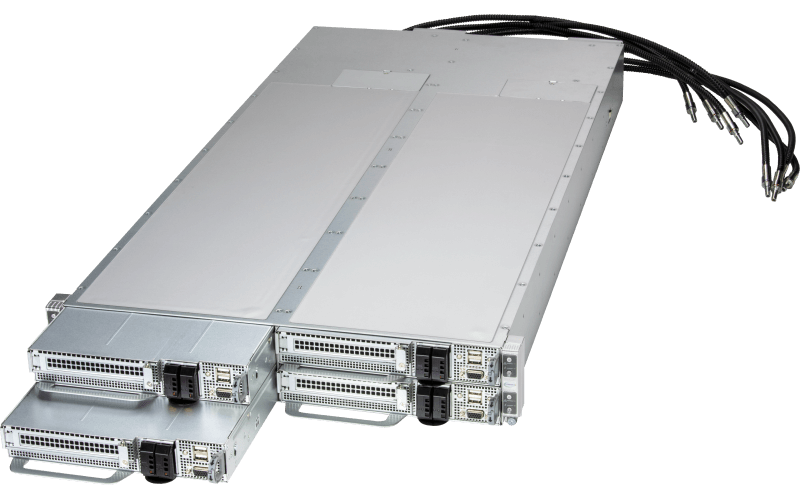

Validated AMD GPU Systems

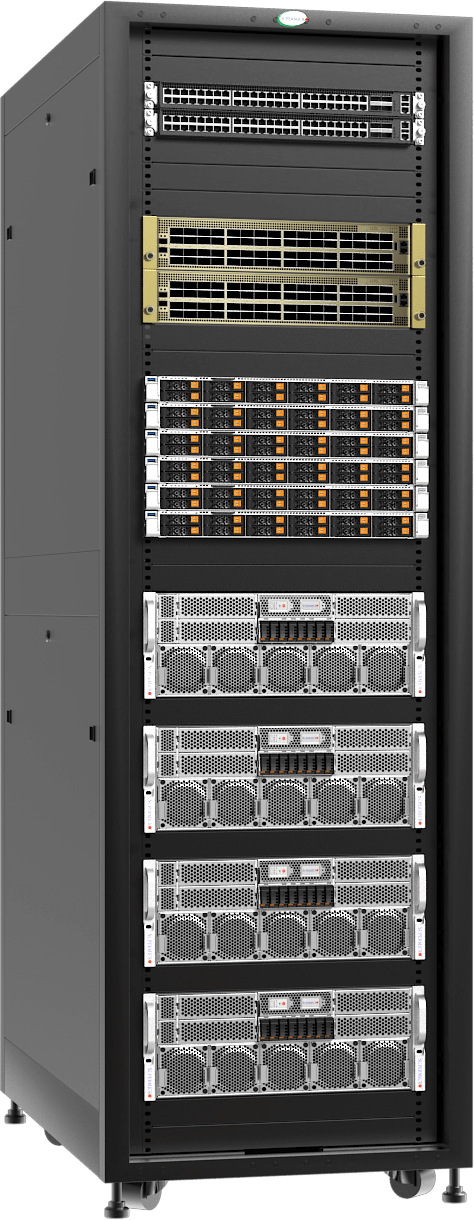

Rack-Scale Integration, Testing, and Validation before Shipping

Cluster-scale Deployment, Services, and Support

Storage and Networking Integration

AMD

AMD EPYC™ CPUs

AMD Instinct™ GPUs

AMD Pensando™ Networking

AMD Enterprise Software

Combining Supermicro’s rack-scale integration with AMD data center products provides turnkey infrastructure engineered for the intensive demands of a modern AI factory. With Supermicro’s liquid-cooling expertise and pre-validated L11 rack solutions, enterprises can deploy clusters of MI350 Series accelerators in a range of sizes, with efficient cooling and minimal onsite configuration. Because Supermicro pre-validates and qualifies the entire stack before delivery, this collaboration accelerates time-to-market. The partnership also reduces the complexity of scaling generative AI workloads, shortening the path from delivery to full-scale model training.

AMD Instinct

AMD Instinct GPUs set the standard for generative AI and high-performance computing with industry-leading memory capacity and bandwidth, headlined by the MI355X’s 288 GB of HBM3E and 8 TB/s of memory bandwidth, so the world’s largest language models can run on fewer nodes.
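
To make the "fewer nodes" claim concrete, the following minimal sketch estimates how many GPUs are needed just to hold a model's weights in HBM. It is an illustration under stated assumptions, not a sizing tool: the 8-GPUs-per-server figure is inferred from the configuration table in this brief, the 405-billion-parameter model and the 80% usable-memory headroom are hypothetical, and the estimate ignores KV cache, activations, and optimizer state.

```python
import math

HBM_PER_GPU_GB = 288   # MI355X HBM3E capacity quoted in this brief
GPUS_PER_SERVER = 8    # assumption: inferred from the table (32 GPUs in 4 servers)


def server_hbm_gb() -> int:
    """Aggregate HBM capacity of one server, in GB."""
    return HBM_PER_GPU_GB * GPUS_PER_SERVER


def weights_size_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bytes_per_param


def min_gpus_for_weights(params_billion: float, bytes_per_param: float,
                         headroom: float = 0.8) -> int:
    """Smallest GPU count whose usable HBM holds the weights.

    `headroom` is a hypothetical fraction of HBM assumed available
    for weights; the remainder is left for everything else.
    """
    usable_per_gpu = HBM_PER_GPU_GB * headroom
    return math.ceil(weights_size_gb(params_billion, bytes_per_param) / usable_per_gpu)


if __name__ == "__main__":
    # Hypothetical 405B-parameter model stored at 2 bytes per parameter.
    print("HBM per server (GB):", server_hbm_gb())
    print("Weights (GB):", weights_size_gb(405, 2))
    print("Minimum GPUs for weights:", min_gpus_for_weights(405, 2))
```

Under these assumptions the weights of the hypothetical model fit within a single server; real deployments need additional memory for inference or training state.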

AMD Open Software Stack

The ROCm software stack gives customers an open, end-to-end ecosystem for leading frameworks and libraries and, together with the enterprise suite, simplifies the AI lifecycle with optimized microservices and solution blueprints.

AMD EPYC

AMD EPYC processors deliver leadership performance by combining industry-leading core counts of up to 192 “Zen 5” cores with high memory bandwidth, providing an efficient foundation for end-to-end AI workloads.

AMD Pensando

AMD Pensando AI NICs maximize AI cluster efficiency with a fully programmable P4 pipeline and Ultra Ethernet Consortium (UEC)-ready features, such as intelligent packet spraying and path-aware congestion control, reducing job completion times by up to 20% compared to traditional RoCEv2 solutions.

Storage

Supermicro and AMD work with leading storage vendors to create a full-stack solution that connects seamlessly to the validated AI platform solutions.

| Supermicro L11 BOM SKU # | SRS-48UAC-MI350-8U4N-R0 | SRS-48UAC-MI355-10U4N-R0 | SRS-48ULC-MI355-4U8N-R0 | SRS-52ULC-MI355-4U12N-R0 |
|---|---|---|---|---|
| Supermicro Server | AS -8126GS-TNMR | AS -A126GS-TNMR | AS -4126GS-NMR-LCC | AS -4126GS-NMR-LCC |
| Server Cooling | Air | Air | Liquid | Liquid |
| GPU Family | MI350X | MI355X | MI355X | MI355X |
| Server Cluster Size (S, M, L) | 4, 16, 64 | 4, 16, 64 | 4, 32, 64 | 8, 48, 128 |
| Number of Instinct GPUs (S, M, L) | 32, 128, 512 | 32, 128, 512 | 32, 256, 512 | 64, 384, 1024 |
| Instinct GPU Model | MI350X AC | MI355X AC | MI355X DLC | MI355X DLC |
| Number of Pensando AI NICs (S, M, L) | 40, 160, 640 | 40, 160, 640 | 40, 320, 640 | 80, 480, 1280 |
| Rack Power (for Medium Cluster Size) | 48.47 kW | 63.61 kW | 120.36 kW | 178.71 kW |
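
The GPU and NIC counts in the table scale linearly with the number of servers. The sketch below reproduces them from the server counts. The per-server ratios (8 GPUs and 10 AI NICs) are not stated in this brief; they are inferred by dividing the table's totals by its server counts, and the function and dictionary names are illustrative.

```python
# Ratios inferred from the table (an assumption, not a stated specification):
# 32 GPUs / 4 servers = 8, and 40 AI NICs / 4 servers = 10.
GPUS_PER_SERVER = 8
NICS_PER_SERVER = 10

# Server counts for the small, medium, and large clusters, per L11 SKU.
SERVERS_BY_SKU = {
    "SRS-48UAC-MI350-8U4N-R0": (4, 16, 64),
    "SRS-48UAC-MI355-10U4N-R0": (4, 16, 64),
    "SRS-48ULC-MI355-4U8N-R0": (4, 32, 64),
    "SRS-52ULC-MI355-4U12N-R0": (8, 48, 128),
}


def cluster_totals(servers: int) -> dict:
    """Return server, GPU, and AI NIC totals for a cluster of `servers` nodes."""
    return {
        "servers": servers,
        "gpus": servers * GPUS_PER_SERVER,
        "nics": servers * NICS_PER_SERVER,
    }


if __name__ == "__main__":
    for sku, sizes in SERVERS_BY_SKU.items():
        for label, servers in zip("SML", sizes):
            t = cluster_totals(servers)
            print(f"{sku} [{label}]: {t['servers']} servers, "
                  f"{t['gpus']} GPUs, {t['nics']} AI NICs")
```

A check like this is useful when planning a cluster size that is not listed: for example, `cluster_totals(24)` gives the GPU and NIC totals for a 24-server build under the same assumed ratios.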