Accelerated Building Blocks with Intel GPUs

Supermicro

Built to Accelerate

For Cloud Scale AI Training and Inference

Demand for high-performance AI/Deep Learning (DL) training compute has doubled in size every 3.5 months since 2013 (according to OpenAI) and is accelerating with the growing size of data sets and the number of applications and services based on large language models (LLMs), computer vision, recommendation systems, and more.

With the increased demand for greater training and inference performance, throughput, and capacity, the industry needs purpose-built systems that offer increased efficiency, lower cost, ease of implementation, flexibility to enable customization, and scaling of AI systems. AI has become an essential technology for diverse areas such as copilots, virtual assistants, manufacturing automation, autonomous vehicle operations, and medical imaging, to name a few. Supermicro has partnered with Intel to provide cloud scale system and rack design with Intel Gaudi AI Accelerators.

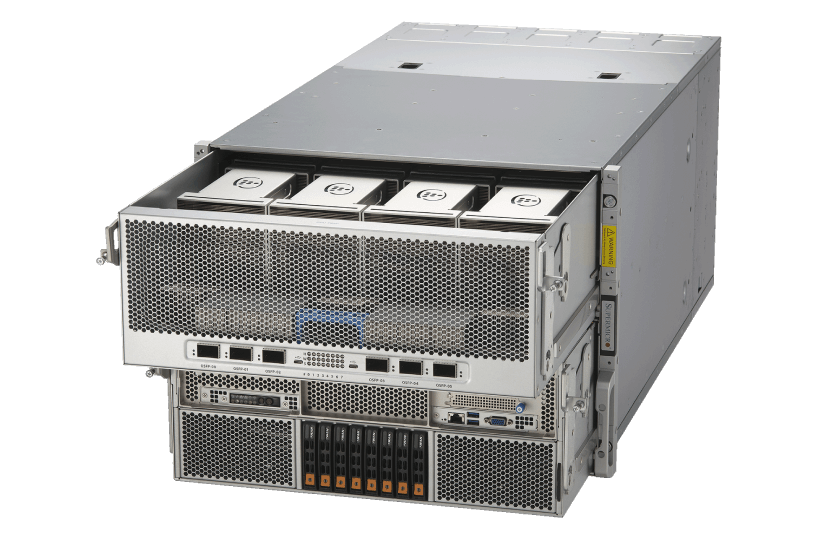

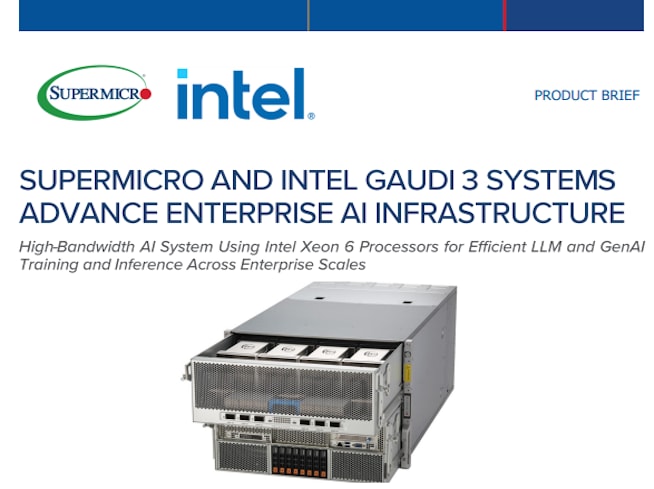

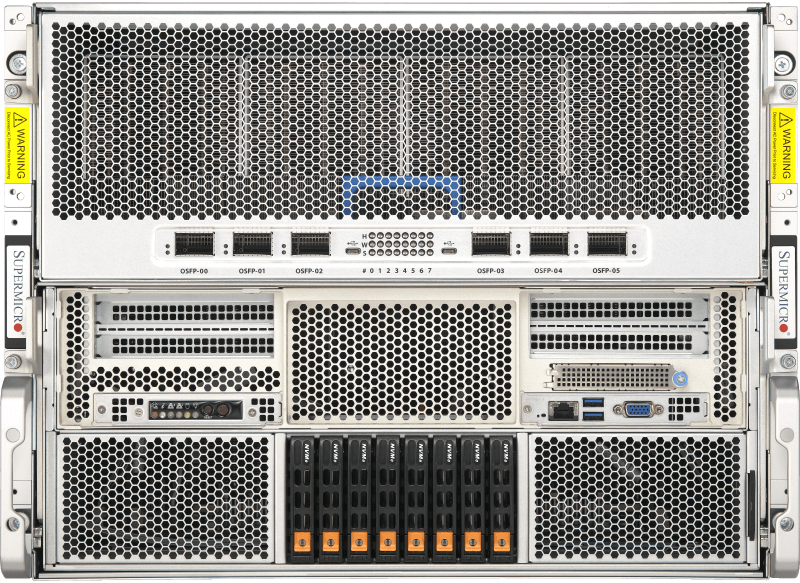

New Supermicro X14 Gaudi® 3 AI Training and Inference Platform

Bringing choice to the enterprise AI market, the new Supermicro X14 AI training platform is built on the third generation Intel® Gaudi 3 accelerators, designed to further increase the efficiency of large-scale AI model training and AI inferencing. Available in both air-cooled and liquid-cooled configurations, Supermicro's X14 Gaudi 3 solution easily scales to meet a wide range of AI workload requirements.

- GPU: 8 Gaudi 3 HL-325L (air-cooled) or HL-335 (liquid-cooled) accelerators on OAM 2.0 baseboard

- CPU: Dual Intel® Xeon® 6 processors

- Memory: 24 DIMMs - up to 6TB memory in 1DPC

- Drives: Up to 8 hot-swap PCIe 5.0 NVMe

- Power Supplies: 8 3000W high efficiency fully redundant (4+4) Titanium Level

- Networking: 6 on-board OSFP 800GbE ports for scale-out

- Expansion Slots: 2 PCIe 5.0 x16 (FHHL) + 2 PCIe 5.0 x8 (FHHL)

- Workloads: AI Training and Inference

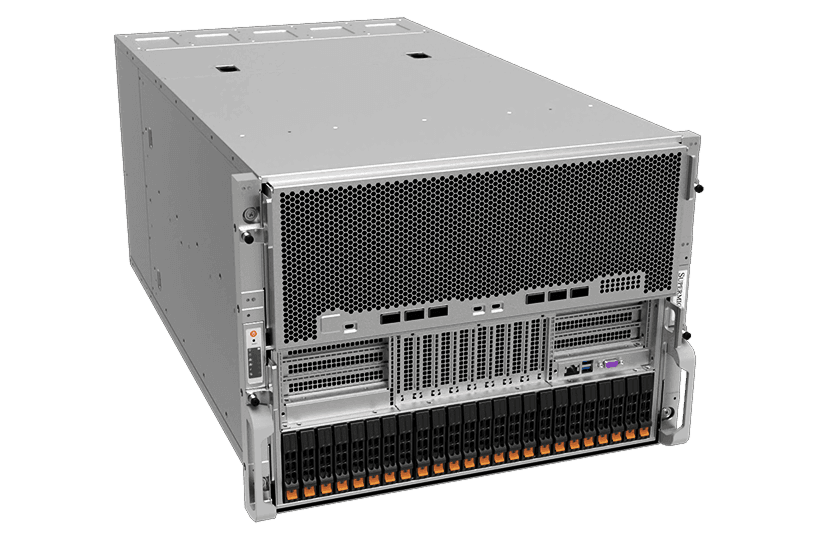

Supermicro Gaudi®2 AI Training Server

Building on the success of the original Supermicro Gaudi AI training system, the Gaudi 2 AI server prioritizes two key considerations: integrating AI accelerators with built-in high-speed networking modules to drive operation efficiency for training state-of-the-art AI models and bringing the AI industry the choice it needs.

- GPU: 8 Gaudi2 HL-225H mezzanine cards

- CPU: Dual 3rd Gen Intel® Xeon® Scalable processors

- Memory: 32 DIMMs - up to 8TB registered ECC DDR4-3200MHz SDRAM

- Drives: up to 24 hot-swap drives (SATA/NVMe/SAS)

- Power: 6x 3000W High efficiency (54V+12V) fully-redundant power supplies

- Networking: 24x 100GbE (48 x 56Gb) PAM4 SerDes Links by 6 QSFP-DDs

- Expansion Slots: 2x PCIe 4.0 switches

- Workloads: AI Training and Inference

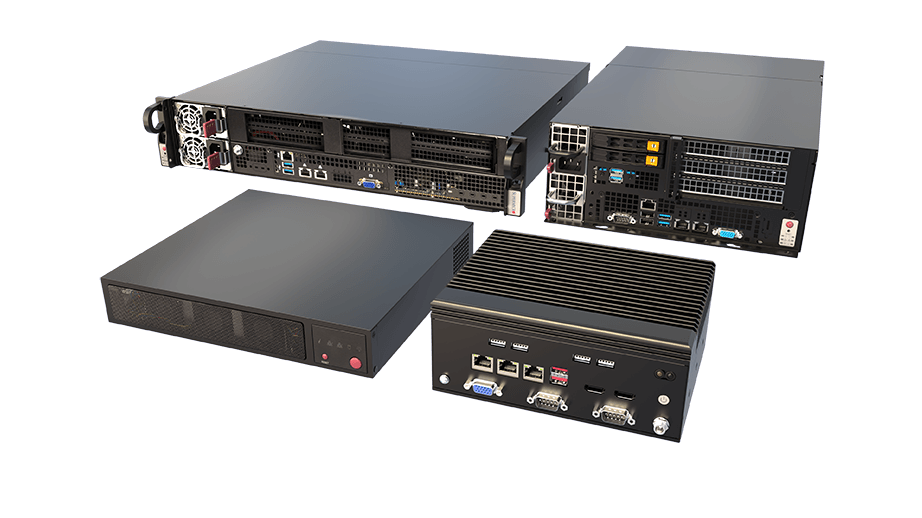

AI Performance and Efficiency at the Edge – Powered by Intel

Unlock powerful, power-efficient edge AI with purpose-built system solutions from Intel and Supermicro. Supermicro’s portfolio features a broad section of advanced CPUs and GPUs to deliver high-performance, low-latency AI results in space-constrained and remote environments, helping businesses scale data-driven applications with confidence.

- Optimize performance with integrated CPU, GPU, and NPU architectures

- Run AI inference faster with OpenVINO

- Deploy reliable, energy-efficient AI across industries like retail, healthcare, and manufacturing

Supermicro and Intel® Xeon® with Gaudi® 3 Show Remarkable Performance

Read the White PaperSupermicro Server With the Intel® Gaudi® 3 Is Optimized for Real-World AI Scenarios

Read the Product BriefSupermicro X14 Intel® Gaudi® AI Accelerator Cluster Reference Design

Read the Product BriefSupermicro with GAUDI 3 AI Delivers Scalable Performance for AI Requirements

Read the White PaperSupermicro and Intel GAUDI 3 Systems Advance Enterprise AI Infrastructure

Read the Product BriefSupermicro X13 Hyper Empowers Enterprise AI Workloads on the VMWARE Platform

Read the Solution BriefAccelerating AI Compute With Supermicro Servers In The INTEL® Developer Cloud

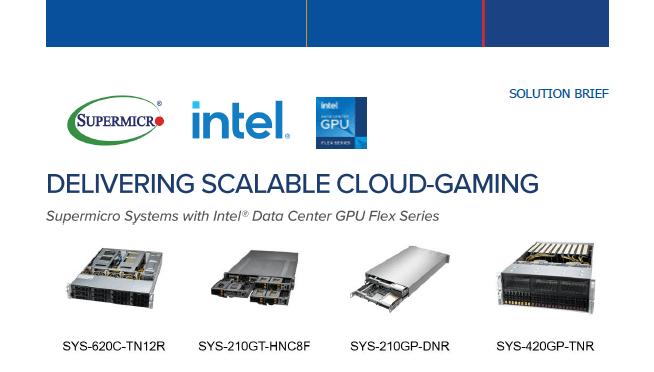

Read the Success StorySuperior Media Processing and Delivery Solution Based On Supermicro Servers W/ Intel® Data Center GPU Flex Series

Read the Solution Brief