What is Edge Computing?

Edge computing is a decentralized computing model in which data processing occurs at or near the point of origin, rather than being transmitted to a centralized data center or cloud environment. This approach contrasts with traditional computing architectures that rely heavily on centralized processing, often located far from the devices generating the data.

In an edge computing framework, data is analyzed and acted on much closer to where it is generated, whether it comes from IoT devices, edge sensors, or industrial control systems. This local approach to computing enables systems to operate with greater autonomy, allowing them to interpret and act on data without relying on constant communication with a central cloud or data center.

Edge computing is closely associated with the concept of the intelligent edge, where devices at the edge of the network process and analyze data in real time, supporting smarter and faster decision-making. Many of these workloads fall under the umbrella of IoT applications, which are designed to take advantage of localized computing for improved responsiveness.

The rise of edge computing reflects the growing need to manage the massive influx of data generated by distributed devices in real time. As digital environments become increasingly complex and geographically dispersed, conventional centralized architectures often struggle to meet performance and scalability demands. Edge computing addresses this challenge by distributing compute capacity across various points in the network, which supports faster insights and more adaptive system behavior.

This decentralized model represents a fundamental shift in how organizations build and deploy modern applications. Rather than funneling all processing tasks to a central location, edge computing empowers localized operations and supports scalable, resilient infrastructure across industries, from manufacturing and logistics to healthcare and smart cities. These deployments often use intermediary systems such as IoT gateways to connect edge devices to broader networks.

How Edge Computing Works

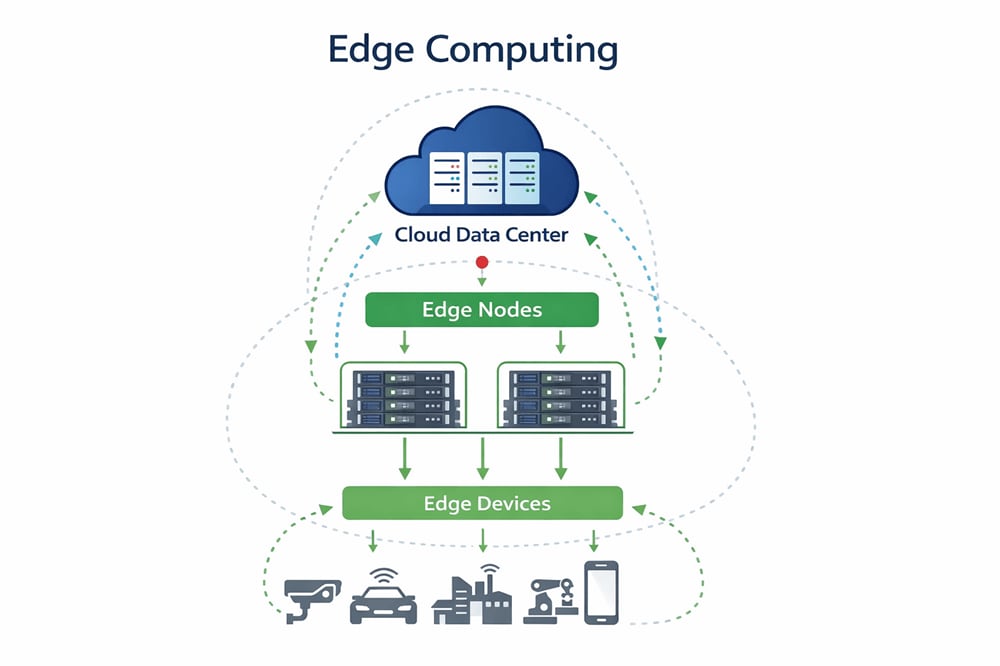

Edge computing functions by relocating key computing tasks, such as data analysis and processing, from centralized data centers to distributed locations that are physically closer to where the data is generated. This shift is not merely about geography but about restructuring the architecture to support time-sensitive operations, reduce network dependency, and enable real-time decision-making at the source. Edge environments typically consist of a layered system that includes edge devices, localized computing nodes, and networking components that coordinate with central systems as needed.

Embedded servers designed for the edge play a crucial role in this architecture. They are engineered to be energy-efficient while still delivering the performance that demanding edge workloads require. This emphasis on green computing aims to minimize environmental impact by reducing energy consumption, and with it the carbon footprint, while maximizing operational efficiency.

Equally important, these server solutions are designed to operate reliably under challenging environmental conditions. This ensures consistent performance across a variety of settings, including those with extreme temperatures or other demanding operational requirements. The versatility and resilience of these servers make them ideal for a wide range of edge computing applications.

Edge computing systems are also typically designed with enhanced security in mind, given that they often process sensitive data outside traditional IT perimeters. Localized security controls, encryption, and system hardening are integral to ensuring the integrity and privacy of data processed at the edge.

By deploying compute power closer to where data is produced, edge computing enables faster processing times, reduces strain on network bandwidth, and enhances the responsiveness of digital services and devices.

Edge vs. Cloud vs. Fog Computing

While edge, cloud, and fog computing all relate to how and where data is processed, each represents a distinct approach to computing architecture with different applications and performance characteristics.

Cloud computing relies on centralized data centers, often located far from the point of data generation. In this model, data is transmitted over a network (typically the internet) to be processed, stored, and managed by cloud service providers. This approach offers scalability and central control but can introduce latency and bandwidth limitations, particularly for real-time or high-volume applications.

By contrast, edge computing processes data locally or near its source. This model reduces the distance data must travel and minimizes latency by analyzing information directly on the device or on a nearby edge node. It is well-suited for use cases requiring immediate insights or action, such as autonomous systems, industrial automation, or on-site video analytics.

Fog computing acts as an intermediary between edge and cloud environments. It extends cloud capabilities closer to the edge by introducing a layer of distributed computing that operates between local devices and centralized cloud infrastructure. Fog computing helps manage tasks that are too resource-intensive for edge devices but too latency-sensitive for cloud-only processing.

In essence, cloud computing is centralized, edge computing is fully decentralized, and fog computing offers a hybrid approach. Each model has its place depending on the specific requirements for speed, bandwidth, data sovereignty, and processing power.
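
The trade-off between the three models can be illustrated with a simple placement rule. The sketch below is hypothetical: the tier limits and round-trip times (`EDGE_MAX_CORES`, `FOG_MAX_CORES`, `FOG_RTT_MS`, `CLOUD_RTT_MS`) are assumed numbers chosen for illustration, and real schedulers weigh many more factors, such as cost, data sovereignty, and current load.

```python
from dataclasses import dataclass

# Assumed, illustrative limits for each tier (not measured values).
EDGE_MAX_CORES = 4     # compute available on a single edge device
FOG_MAX_CORES = 64     # compute available on a nearby fog node
FOG_RTT_MS = 10.0      # round trip from device to fog node
CLOUD_RTT_MS = 80.0    # round trip from device to a cloud region


@dataclass
class Task:
    name: str
    latency_budget_ms: float  # maximum acceptable response time
    cores_needed: int         # rough compute requirement


def place(task: Task) -> str:
    """Return the tier a task should run on under the assumed limits."""
    # Latency-tolerant work can use centralized, elastic cloud capacity.
    if task.latency_budget_ms >= CLOUD_RTT_MS:
        return "cloud"
    # Time-critical work that fits on the device runs at the edge.
    if task.cores_needed <= EDGE_MAX_CORES:
        return "edge"
    # Too heavy for the device, too time-critical for the cloud: fog.
    if task.latency_budget_ms >= FOG_RTT_MS and task.cores_needed <= FOG_MAX_CORES:
        return "fog"
    return "unplaceable"


tasks = [
    Task("monthly-report", latency_budget_ms=5000, cores_needed=128),
    Task("brake-control", latency_budget_ms=5, cores_needed=2),
    Task("video-analytics", latency_budget_ms=30, cores_needed=16),
    Task("oversized-vision", latency_budget_ms=5, cores_needed=32),
]
for t in tasks:
    print(t.name, "->", place(t))
# monthly-report -> cloud
# brake-control -> edge
# video-analytics -> fog
# oversized-vision -> unplaceable
```

The rule prefers the most centralized tier that still meets the latency budget, which mirrors the idea that fog computing fills the gap between what a device can handle and what the cloud can answer in time.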

Key Benefits of Edge Computing

Edge computing offers several strategic and operational advantages that make it a compelling architecture for modern data-intensive applications.

One of the most significant benefits is reduced latency. By processing data directly at or near the source, edge computing eliminates the need to transmit information over long distances to centralized systems. This dramatically shortens response times, which is critical for real-time applications such as autonomous vehicles, augmented reality, industrial automation, and remote diagnostics in telemedicine.
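
A quick calculation shows why distance matters. Light in optical fiber travels at roughly 200,000 km/s (about two-thirds of its speed in a vacuum), so distance alone sets a floor on round-trip time. The distances below are hypothetical, and the result is only a lower bound: real networks add routing detours, queuing, and processing delays on top.

```python
# Light in optical fiber covers roughly 200,000 km/s, i.e. about 200 km per ms.
FIBER_KM_PER_MS = 200.0


def min_round_trip_ms(distance_km: float) -> float:
    """Physical lower bound on round-trip propagation delay over fiber.

    Ignores routing detours, queuing, and processing time, which add more.
    """
    return 2 * distance_km / FIBER_KM_PER_MS


# Hypothetical distances from a device to where its data is processed.
for label, km in [("distant cloud region", 1500), ("nearby edge node", 10)]:
    print(f"{label}: {min_round_trip_ms(km):.2f} ms")
# distant cloud region: 15.00 ms
# nearby edge node: 0.10 ms
```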

Another key advantage is bandwidth efficiency. Localized data processing allows systems to filter, analyze, and act on data before transmitting only essential information to centralized cloud platforms. This minimizes the volume of data sent over the network and reduces bandwidth usage and associated costs, which is particularly valuable in environments with limited or expensive connectivity.
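
As a minimal sketch of local filtering, the function below reduces a window of raw readings to a compact summary plus any out-of-range values; only that summary would be sent upstream. The window size, the simulated readings, and the `alert_threshold` value are assumptions for illustration.

```python
import json
import statistics


def summarize(readings: list[float], alert_threshold: float) -> dict:
    """Reduce a window of raw sensor readings to a compact summary.

    Out-of-range values are kept individually so they are not averaged away.
    """
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(statistics.fmean(readings), 3),
        "alerts": [r for r in readings if r > alert_threshold],
    }


# Hypothetical window: 600 temperature samples (e.g. 10 minutes at 1 Hz).
window = [21.0 + (i % 10) * 0.1 for i in range(600)]
window[42] = 95.0  # one anomalous spike

raw_bytes = len(json.dumps(window).encode())
summary_bytes = len(json.dumps(summarize(window, alert_threshold=80.0)).encode())
print(f"raw: {raw_bytes} B, summary: {summary_bytes} B")
```

The exact savings depend on the data and encoding, but apart from the alert list the summary stays the same size however many readings the window holds.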

Edge computing can also enhance security and data privacy. Processing data on-site or within local infrastructure reduces the exposure of sensitive information during transmission. This can lower the risk of interception or unauthorized access, especially in industries with stringent regulatory requirements, such as healthcare, finance, and critical infrastructure.

Finally, edge computing contributes to greater system reliability. Because edge devices and nodes can operate independently of the central cloud, they can continue functioning during network disruptions or outages. This localized resilience ensures continuity of service, even when connectivity to the central infrastructure is temporarily lost.
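
One common pattern behind this resilience is store-and-forward: the edge node keeps working and queues outbound messages while the uplink is down, then delivers them once connectivity returns. In this sketch, `fake_send` is a hypothetical stand-in for a real uplink, and the fixed capacity with drop-oldest eviction is an assumed policy; a production system might persist the queue to disk instead.

```python
from collections import deque
from typing import Callable


class StoreAndForward:
    """Queue outbound messages while the uplink is down; flush on reconnect.

    The queue is bounded: once full, the oldest messages are dropped.
    """

    def __init__(self, send: Callable[[str], bool], capacity: int = 1000):
        self._send = send                      # returns True on successful delivery
        self._buffer = deque(maxlen=capacity)  # deque evicts oldest when full

    def publish(self, message: str) -> None:
        self._buffer.append(message)
        self.flush()

    def flush(self) -> None:
        # Deliver in order; stop at the first failure and keep the rest queued.
        while self._buffer:
            if not self._send(self._buffer[0]):
                return
            self._buffer.popleft()

    def pending(self) -> int:
        return len(self._buffer)


# Simulated uplink (hypothetical): fails while link_up is False.
link_up = False
delivered = []


def fake_send(message: str) -> bool:
    if link_up:
        delivered.append(message)
        return True
    return False


q = StoreAndForward(fake_send, capacity=3)
for i in range(5):
    q.publish(f"reading-{i}")  # outage: only the newest 3 are kept
print(q.pending())  # 3
link_up = True      # connectivity restored
q.flush()
print(delivered)    # ['reading-2', 'reading-3', 'reading-4']
```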

These combined benefits make edge computing a powerful approach for organizations aiming to increase performance, reduce operational risks, and better support distributed environments.

Use Cases and Applications

Edge computing enables on-site or near-site processing, which has become essential for industries requiring rapid decision-making and localized control. Its ability to bring compute power closer to the source of data generation has opened new opportunities for innovation, especially in environments where latency, reliability, and responsiveness are critical.

In manufacturing, edge computing enables predictive maintenance, real-time quality control, and production optimization by analyzing sensor data directly on the factory floor. Healthcare systems use edge capabilities to support remote diagnostics, patient monitoring, and medical imaging in environments where low latency can be vital. In retail, edge infrastructure supports intelligent checkout systems, personalized customer experiences, and efficient inventory management by processing data locally in stores.
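
Production predictive-maintenance systems typically rely on trained models, but the core idea of analyzing sensor data directly on the factory floor can be illustrated with a much simpler technique swapped in here: a rolling z-score check that flags readings far outside the recent baseline. The window length, the `z_limit` threshold, and the vibration values are assumptions.

```python
import statistics
from collections import deque


class RollingAnomalyDetector:
    """Flag readings far from the recent baseline (rolling z-score).

    A deliberately simple stand-in for the models used in real
    predictive-maintenance systems.
    """

    def __init__(self, window: int = 50, z_limit: float = 4.0):
        self.history = deque(maxlen=window)  # recent normal readings
        self.z_limit = z_limit               # assumed alert threshold

    def check(self, value: float) -> bool:
        anomalous = False
        if len(self.history) >= 2:  # stdev needs at least two points
            mean = statistics.fmean(self.history)
            stdev = statistics.stdev(self.history)
            if stdev > 0 and abs(value - mean) / stdev > self.z_limit:
                anomalous = True
        if not anomalous:
            self.history.append(value)  # keep outliers out of the baseline
        return anomalous


# Hypothetical vibration amplitudes: steady operation, then a sudden spike.
detector = RollingAnomalyDetector(window=20, z_limit=4.0)
readings = [1.0, 1.1] * 10 + [5.0]
flags = [detector.check(r) for r in readings]
print(flags[-1])  # True
```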

Autonomous vehicles rely heavily on edge computing to interpret sensor data, make driving decisions, and communicate with nearby infrastructure. This all happens in real time and without depending on constant cloud connectivity. Similarly, smart city initiatives utilize edge technologies to manage traffic systems, monitor public safety infrastructure, and optimize energy usage at a local level.

Edge computing is also closely tied to the expansion of IoT edge solutions, which involve processing data on or near connected devices. While these applications are diverse and growing, their technical distinctions are covered in greater depth in a dedicated glossary page on IoT edge.

As a core enabler of distributed computing, edge architecture allows organizations to extend their IT capabilities into the physical world, supporting faster decision-making, more resilient systems, and scalable deployment models. From industrial automation to connected healthcare and intelligent transportation systems, edge computing plays a crucial role in building fast, efficient, and adaptable digital ecosystems across modern enterprises.

Challenges and Considerations

While edge computing offers clear advantages in speed, scalability, and efficiency, it also introduces a set of unique challenges that organizations must address to ensure successful deployment and operation.

One of the primary considerations is management complexity. With computing resources distributed across multiple edge locations, maintaining consistent performance, security, and configuration standards can become increasingly difficult, especially when those locations are remote or physically constrained. IT teams must manage a diverse array of hardware, software, and networking components across these decentralized sites.

Security and data protection are also critical concerns. Although processing data locally can reduce exposure during transmission, edge devices and nodes may be more physically accessible or operate outside traditional enterprise security perimeters. This increases the need for robust endpoint protection, secure boot processes, and real-time monitoring to guard against unauthorized access or tampering.
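
Secure boot depends on hardware roots of trust and signed firmware, which is beyond a short example. A related building block that edge software can use is tamper detection for data in transit, sketched below with an HMAC from Python's standard `hmac` module. The shared key and the payload are hypothetical; in practice keys are provisioned securely rather than hard-coded, and an HMAC provides integrity and authenticity but not confidentiality.

```python
import hashlib
import hmac
import json

# Assumption: each edge node shares a secret key with the upstream service.
# Hard-coded here for illustration only; real keys are provisioned securely.
DEVICE_KEY = b"example-key-for-illustration-only"


def sign(payload: dict, key: bytes = DEVICE_KEY) -> dict:
    """Serialize the payload deterministically and attach an HMAC-SHA256 tag."""
    body = json.dumps(payload, sort_keys=True).encode()
    tag = hmac.new(key, body, hashlib.sha256).hexdigest()
    return {"body": body.decode(), "hmac": tag}


def verify(message: dict, key: bytes = DEVICE_KEY) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = hmac.new(key, message["body"].encode(), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message["hmac"])


msg = sign({"sensor": "line-3/temp", "value": 72.4})
print(verify(msg))  # True
msg["body"] = msg["body"].replace("72.4", "12.4")  # simulate tampering
print(verify(msg))  # False
```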

Interoperability and standardization pose another challenge. Edge environments often involve a wide variety of devices, platforms, and protocols. Ensuring compatibility across these components, especially in multi-vendor or legacy environments, can impact both integration efforts and long-term scalability.

In addition, infrastructure costs can be significant. While edge computing reduces the burden on centralized data centers, deploying and maintaining edge hardware at scale requires investment in ruggedized systems, reliable power sources, and secure connectivity. The return on investment depends heavily on use case, deployment scale, and operational strategy.

Finally, organizations must consider the data lifecycle at the edge. Decisions about which data to process locally, which to discard, and which to send to the cloud for long-term storage or analytics require careful planning and policy enforcement to balance performance with regulatory and business requirements.
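
Data-lifecycle decisions are often expressed as an ordered set of rules, where the first matching rule decides what happens to a record. The categories (`debug`, `alarm`, `telemetry`) and the rules below are hypothetical examples of such a policy, not a recommendation.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    DISCARD = "discard"
    PROCESS_LOCALLY = "process locally"
    FORWARD = "send to cloud"


@dataclass
class Record:
    kind: str           # hypothetical categories: "debug", "alarm", "telemetry"
    contains_pii: bool  # personal data assumed to be subject to residency rules


# Ordered rules: the first matching predicate decides the action.
RULES = [
    (lambda r: r.kind == "debug", Action.DISCARD),       # no long-term value
    (lambda r: r.contains_pii, Action.PROCESS_LOCALLY),  # keep on site
    (lambda r: r.kind == "alarm", Action.FORWARD),       # needs central visibility
]
DEFAULT_ACTION = Action.PROCESS_LOCALLY  # routine telemetry: summarize on site


def lifecycle_action(record: Record) -> Action:
    for matches, action in RULES:
        if matches(record):
            return action
    return DEFAULT_ACTION


print(lifecycle_action(Record("alarm", contains_pii=False)).value)  # send to cloud
```

Keeping the rules in one ordered table makes the policy easy to audit and to update centrally, which helps with the enforcement side of the problem.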

Key Terms in Edge Computing

Understanding the core components of edge computing is essential to grasp how distributed architectures function. Below are several important terms commonly associated with edge environments:

Edge Node

An edge node is a localized computing endpoint that processes or relays data generated by nearby devices. It typically serves as the first processing layer in the edge computing hierarchy, enabling real-time data filtering or decision-making closer to the source.

Gateway

A gateway functions as a bridge between edge devices and central networks or systems. It manages data traffic, handles protocol translation, and often performs basic processing or security tasks before forwarding data upstream or downstream.
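
Protocol translation at a gateway often means decoding a compact device format into something upstream systems understand. The sketch assumes a hypothetical 10-byte big-endian frame (a 16-bit device ID, a 32-bit Unix timestamp, and a 32-bit float reading) and converts it to JSON using Python's `struct` and `json` modules; real industrial protocols are considerably more involved.

```python
import json
import struct

# Hypothetical device frame, big-endian, no padding:
#   uint16 device_id | uint32 unix timestamp | float32 reading  (10 bytes)
FRAME = struct.Struct(">HIf")


def translate(frame: bytes) -> str:
    """Decode one binary frame and re-encode it as JSON for upstream systems."""
    if len(frame) != FRAME.size:
        raise ValueError(f"expected {FRAME.size} bytes, got {len(frame)}")
    device_id, timestamp, reading = FRAME.unpack(frame)
    return json.dumps({
        "device_id": device_id,
        "timestamp": timestamp,
        # float32 values can decode with extra digits; round for readability
        "reading": round(reading, 2),
    })


raw = FRAME.pack(17, 1_700_000_000, 23.5)
print(translate(raw))
# {"device_id": 17, "timestamp": 1700000000, "reading": 23.5}
```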

Micro Data Center

Micro data centers are compact, self-contained facilities that provide compute, storage, and networking resources near the point of use. They support specific applications or localized workloads, reducing the need to send data to distant data centers.

Edge Device

An edge device is any endpoint, such as a sensor, camera, or industrial controller, that generates or consumes data within an edge computing environment. These devices often include limited processing capability to enable real-time responses.

Edge Orchestrator

An edge orchestrator is a software layer or platform that manages, deploys, and monitors workloads across multiple edge nodes. It enables centralized control of decentralized infrastructure, helping maintain consistency and scalability.
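
Many orchestration systems, Kubernetes controllers being a well-known example, work declaratively: an operator states the desired workloads for each node, and a control loop repeatedly compares that with what the nodes report and issues corrective actions. The sketch below shows only the comparison step; the node and workload names are hypothetical, and real orchestrators also handle rollout order, health checks, and failures.

```python
def reconcile(desired: dict[str, set[str]],
              actual: dict[str, set[str]]) -> list[tuple[str, str, str]]:
    """List the actions needed to move each node from its reported
    workloads to the desired ones."""
    actions = []
    for node in sorted(desired.keys() | actual.keys()):
        want = desired.get(node, set())
        have = actual.get(node, set())
        for workload in sorted(want - have):
            actions.append(("deploy", node, workload))
        for workload in sorted(have - want):
            actions.append(("remove", node, workload))
    return actions


desired = {"store-01": {"pos-analytics", "inventory"},
           "store-02": {"pos-analytics"}}
actual = {"store-01": {"pos-analytics"},
          "store-02": {"pos-analytics", "legacy-agent"}}
actions = reconcile(desired, actual)
print(actions)
# [('deploy', 'store-01', 'inventory'), ('remove', 'store-02', 'legacy-agent')]
```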

Latency

In edge computing, latency refers to the delay between the moment data is generated and the moment it is processed or acted upon. Reducing latency is one of the main goals of placing compute resources closer to the data source.

Real-Time Processing

This term refers to the ability of a system to ingest, analyze, and act on data within strict time bounds, typically on the order of milliseconds. Edge computing supports real-time processing by minimizing transmission delays and enabling immediate local computation.
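
In practice, "real-time" usually means meeting a latency budget for each event. The helper below is a minimal sketch that times a handler against an assumed budget; the handler, the threshold of 100, and the 10 ms budget are hypothetical. It only measures after the fact and cannot preempt a slow handler; hard real-time guarantees require a real-time operating system or scheduler.

```python
import time
from typing import Any, Callable


def run_with_deadline(handler: Callable[[Any], Any], event: Any,
                      budget_ms: float) -> tuple[Any, float, bool]:
    """Run handler(event); return (result, elapsed_ms, met_budget).

    The check happens after the handler finishes, so it reports a missed
    deadline but does not interrupt a slow handler.
    """
    start = time.perf_counter()
    result = handler(event)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return result, elapsed_ms, elapsed_ms <= budget_ms


# Hypothetical handler: react locally when a reading crosses a threshold.
def on_reading(value: float) -> str:
    return "stop-conveyor" if value > 100 else "ok"


result, elapsed, on_time = run_with_deadline(on_reading, 120.0, budget_ms=10)
print(result, f"{elapsed:.3f} ms", "on time" if on_time else "late")
```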

FAQs

- What's the difference between edge computing and cloud computing?

While both edge and cloud computing involve data storage and processing, the primary difference lies in location. Cloud computing centralizes processing in large data centers, often located far from end users. In contrast, edge computing processes data closer to where it is generated.

- How does edge computing enhance IoT?

Edge computing complements IoT by enabling devices to process and analyze data locally, rather than sending it to a central cloud for processing. This allows for quicker decision-making, a key advantage for time-sensitive applications such as industrial automation, smart cities, or autonomous systems.

- Is edge computing more secure than cloud computing?

Edge computing can improve data privacy and security by limiting how far sensitive data must travel, reducing exposure during transmission. However, it also introduces new security challenges, such as managing a large number of distributed endpoints. Both edge and cloud environments require comprehensive, context-specific security strategies.

- Why is edge computing important for 5G?

Edge computing is important for 5G networks because it reduces latency. 5G speeds up wireless transmission, but much of that benefit is lost if data must still travel to a distant data center for processing. Placing edge infrastructure close to the network ensures that processing can keep pace, especially for mobile and bandwidth-intensive applications.

- What are examples of edge computing in real life?

Real-world examples of edge computing include autonomous vehicles processing sensor data in real time, retail stores using in-store analytics for customer behavior, and industrial facilities deploying predictive maintenance systems on factory floors. These scenarios require immediate data processing without relying on distant data centers.