NVIDIA Blackwell Ultra, Now Shipping

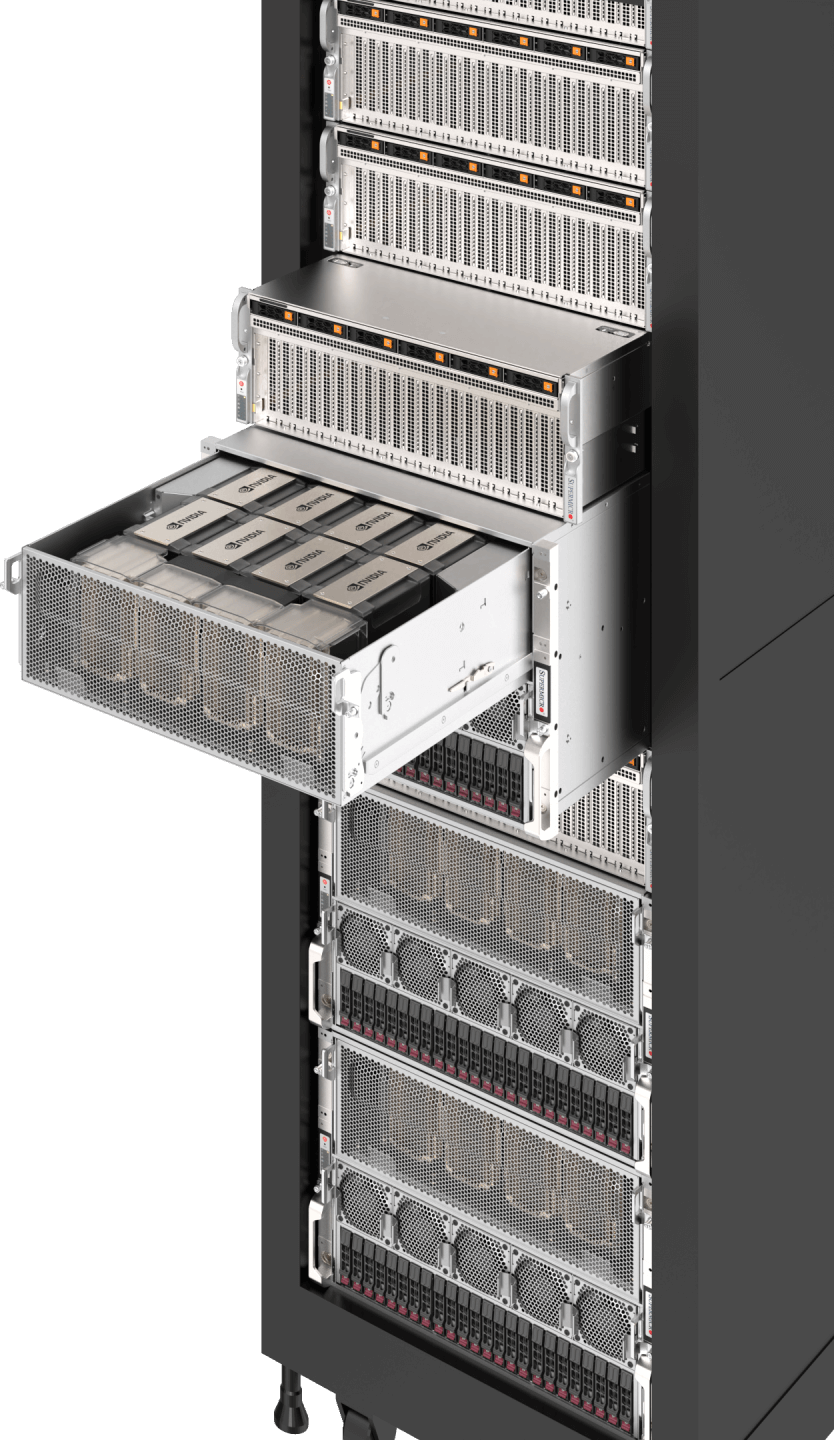

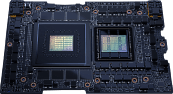

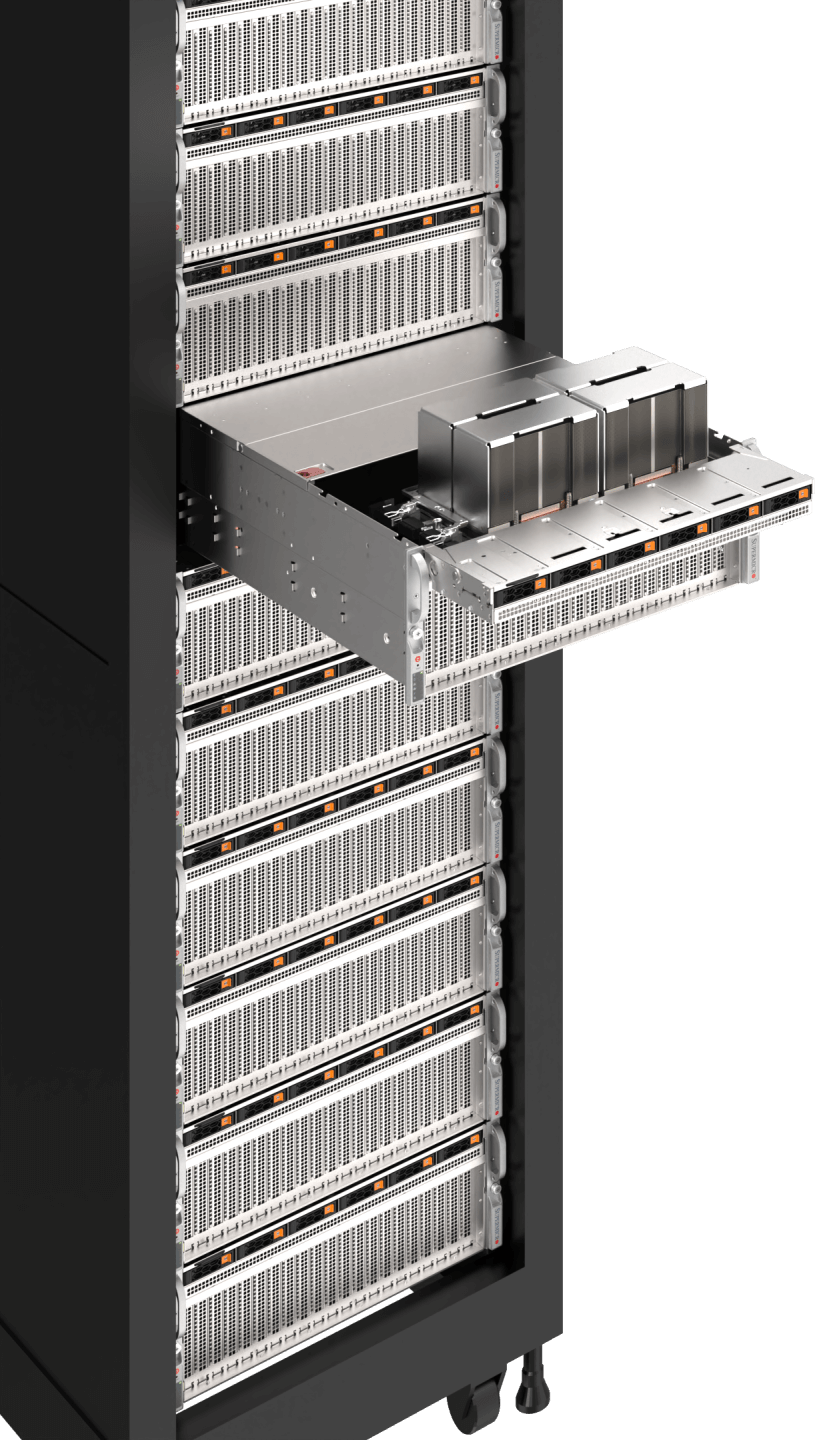

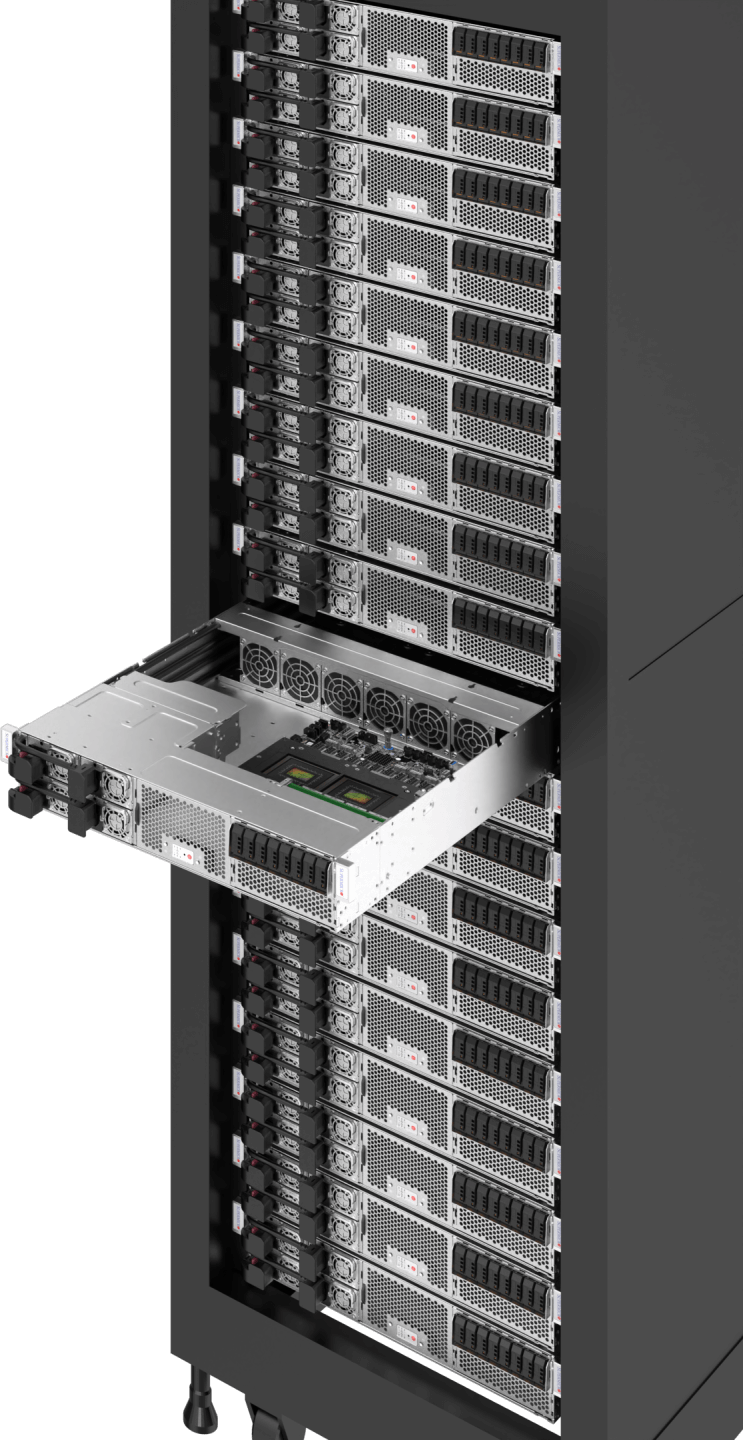

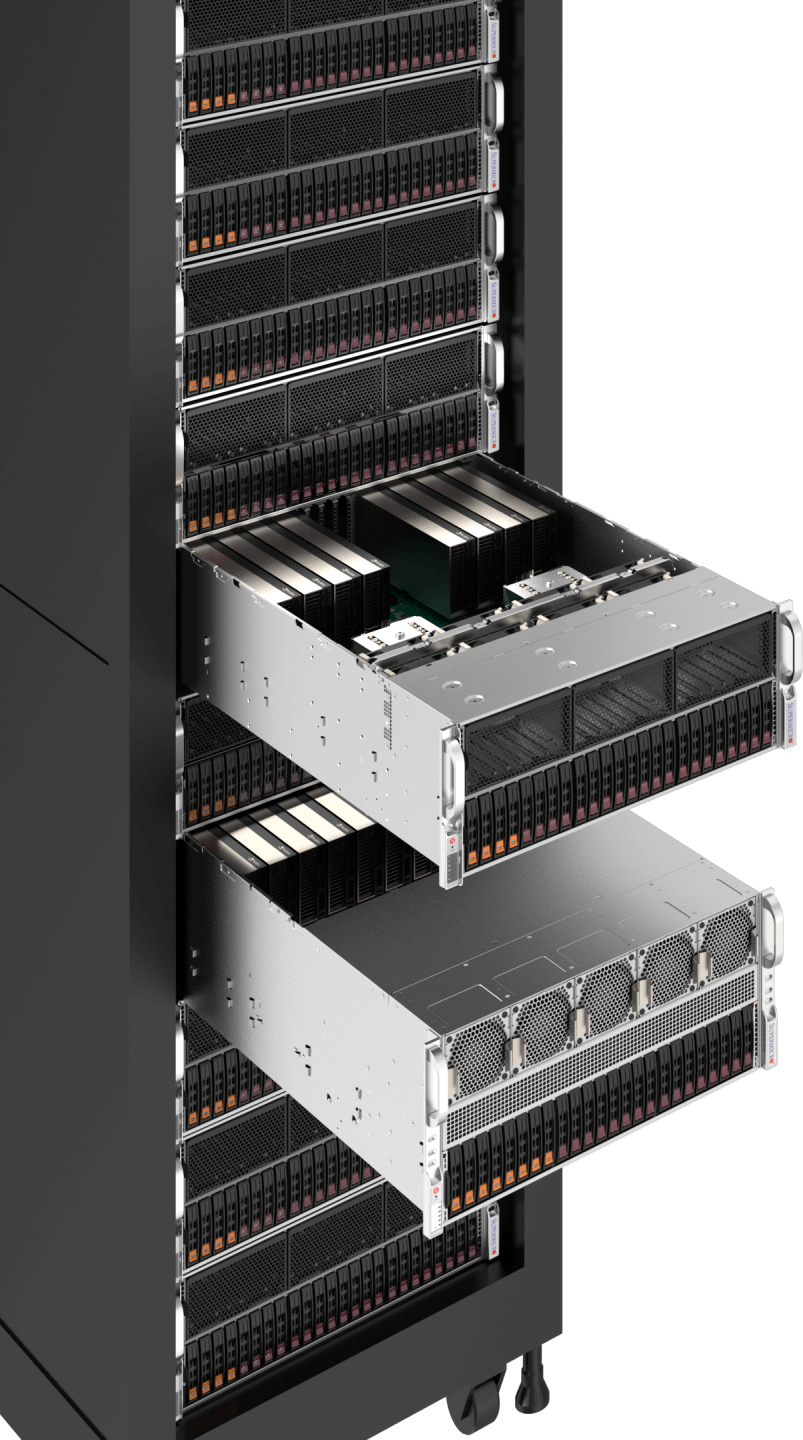

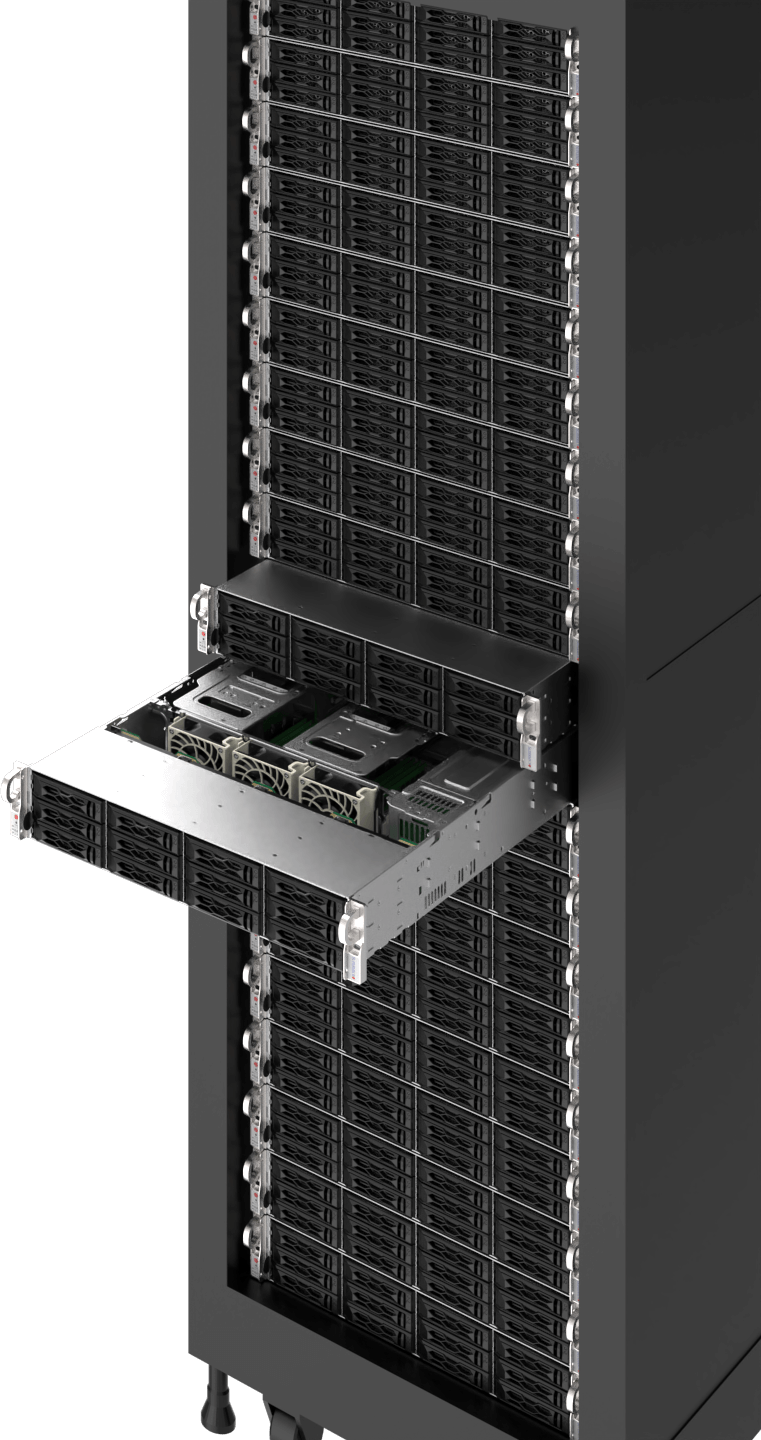

NVIDIA GB300 NVL72 SuperCluster

xAI Colossus

Unlock the full potential of AI with Supermicro's cutting-edge, AI-ready infrastructure solutions. From large-scale training to intelligent edge inferencing, our turnkey reference designs streamline and accelerate AI deployment. Power your workloads with optimal performance and scalability while optimizing costs and minimizing environmental impact. Explore Supermicro's diverse selection of AI workload-optimized solutions and accelerate every aspect of your business.

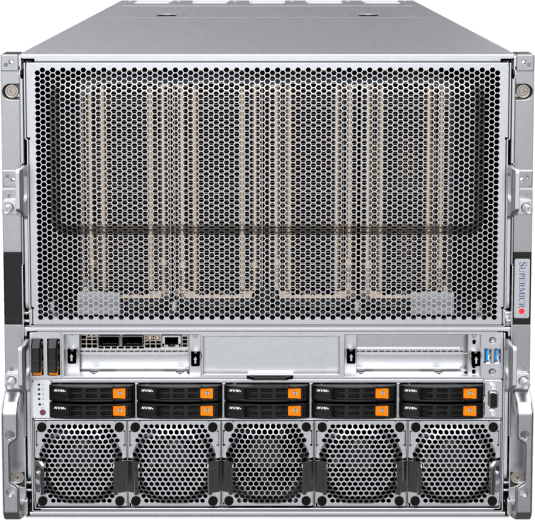

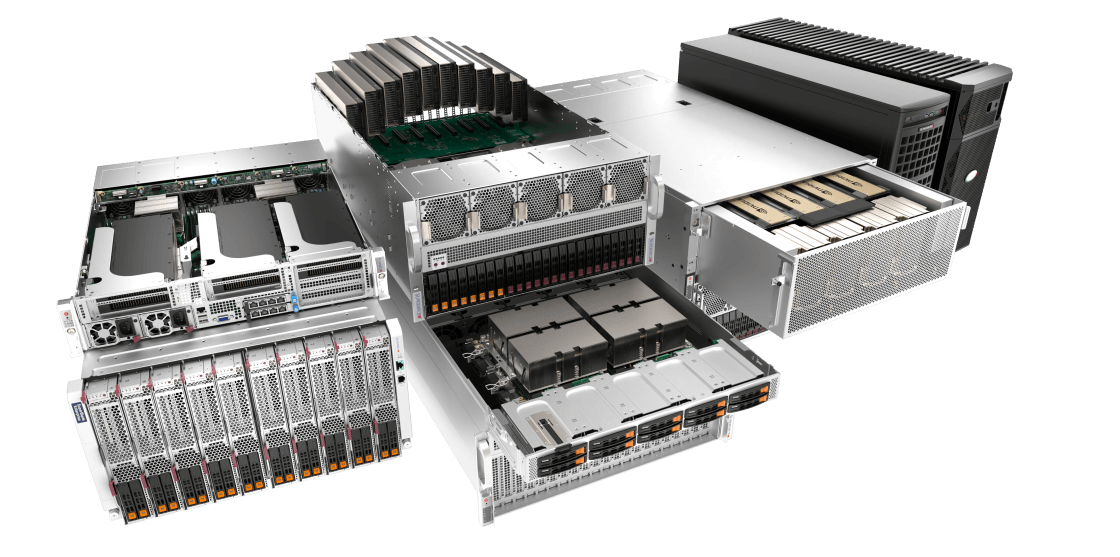

Large-Scale AI Training and Inference

Large Language Models, Generative AI, Autonomous Driving, Robotics

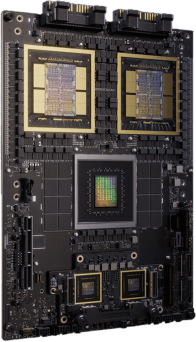

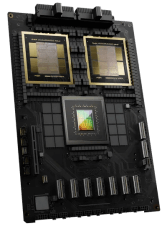

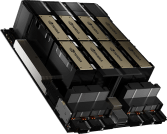

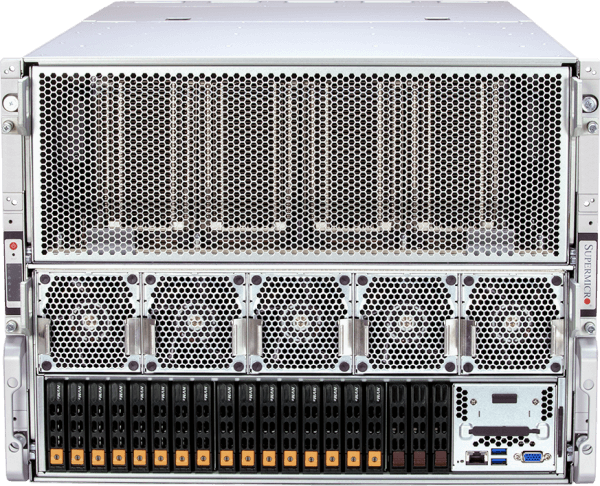

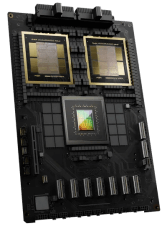

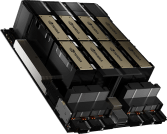

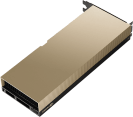

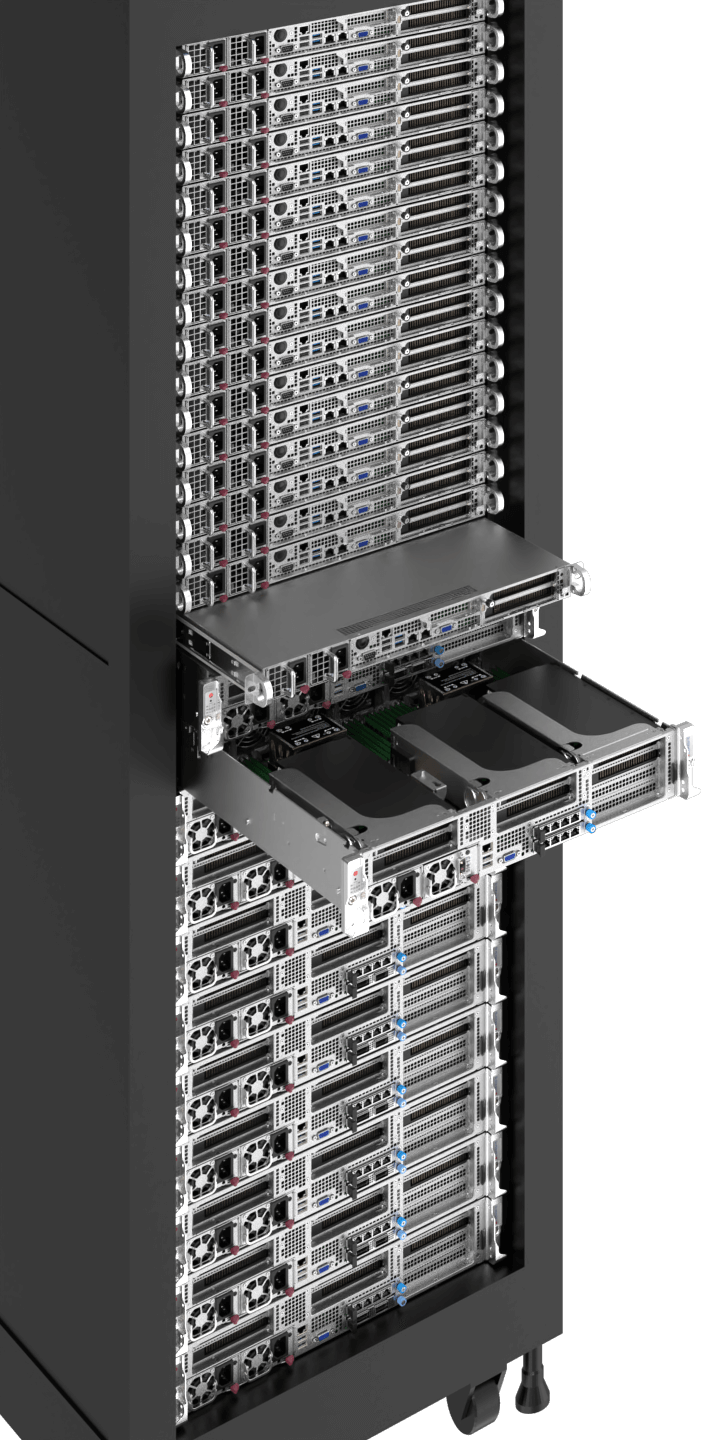

Large-scale AI training requires cutting-edge technologies to make full use of the parallel computing power of GPUs and handle the billions, if not trillions, of AI model parameters that must be trained on massive datasets. Built on NVIDIA HGX™ and GB300/GB200 NVL72, with the fastest NVLink® and NVSwitch® GPU-to-GPU interconnects at up to 1.8 TB/s of bandwidth and the fastest 1:1 networking to each GPU for node clustering, these systems are optimized to train large language models from scratch and serve them to millions of concurrent users. Supermicro completes the stack with NVMe storage for a fast AI data pipeline and fully integrated racks with liquid cooling options, ensuring rapid deployment and a smooth AI training experience.

Workload Sizes

- Extra Large

- Large

- Medium

- Storage

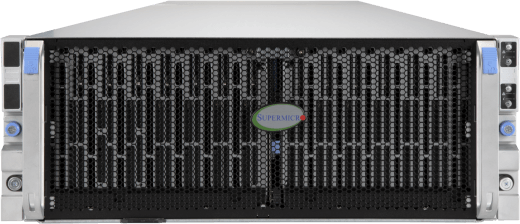

Liquid-Cooled NVIDIA HGX B300/B200 Systems and Racks

NVIDIA GB300 NVL72 with Supermicro

NVIDIA GB200 NVL72 with Supermicro Liquid Cooling

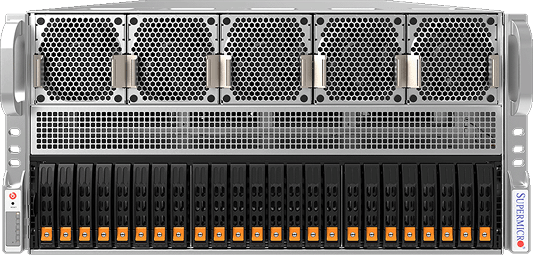

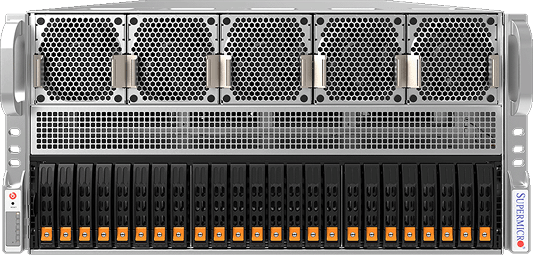

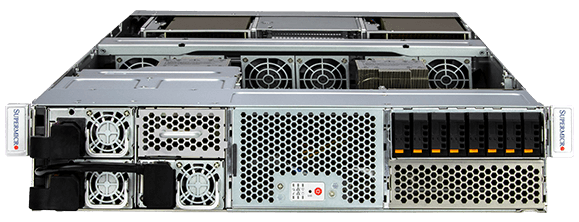

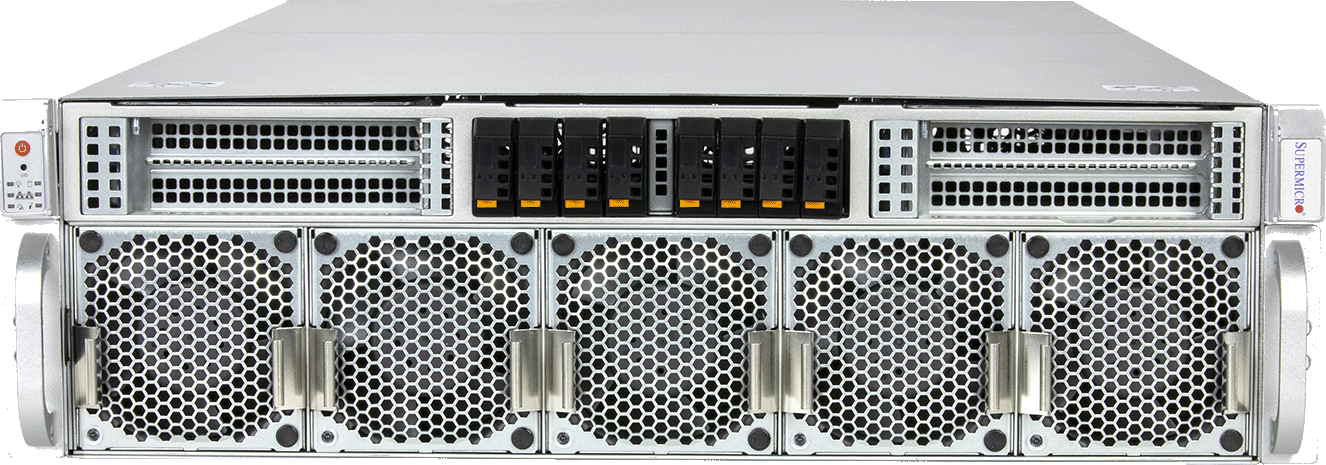

Air-Cooled NVIDIA HGX B300/B200 Systems and Racks

8U System with 8-GPU NVIDIA HGX H200

Petabyte-Scale NVMe

Petabyte-Scale Hard Disk Storage

Resources

HPC/AI

Engineering Simulation, Scientific Research, Genomic Sequencing, Drug Discovery

A growing number of HPC workloads use machine learning algorithms and GPU-accelerated parallel computing to deliver faster results and shorten time to discovery for scientists, researchers, and engineers. Many of the world's fastest supercomputing clusters now take advantage of GPUs and the power of AI.

HPC workloads typically require data-intensive simulations and analytics with massive datasets and high precision requirements. GPUs such as NVIDIA's H100/H200 deliver unprecedented double-precision performance of 60 teraflops per GPU, and Supermicro's highly flexible HPC platforms support high GPU and CPU counts in a variety of dense form factors with rack-scale integration and liquid cooling.

Workload Sizes

- Large

- Medium

8U/10U System with 8-GPU NVIDIA HGX B200

NVIDIA GB200 NVL4

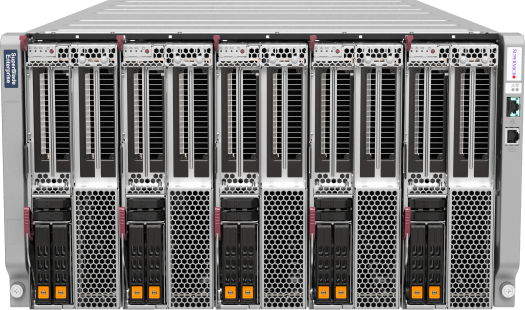

6U/8U SuperBlade®

3U/4U/5U, 8–10 GPUs, PCIe

1U Grace Hopper System

Resources

Enterprise AI Inference and Training

Generative AI, AI Services/Applications, Chatbots, Recommender Systems, Business Automation

The rise of generative AI has been recognized as the next frontier for industries ranging from technology to banking and media. The race to adopt AI is on, as organizations seek to drive innovation, significantly boost productivity, streamline operations, make data-driven decisions, and improve the customer experience.

Whether for AI-powered applications and business models, intelligent human-like chatbots for customer service, or AI assistance for code generation and content creation, enterprises can use open frameworks, libraries, and pre-trained AI models and fine-tune them for unique use cases with their own datasets. As enterprises adopt AI infrastructure, Supermicro's variety of GPU-optimized systems provides an open modular architecture, vendor flexibility, and easy deployment and upgrade paths for rapidly evolving technologies.

Workload Sizes

- Extra Large

- Large

- Medium

3U/4U/5U, 8–10 GPUs, PCIe

6U SuperBlade®

2U MGX System

2U Grace MGX System

Resources

Visualization & Design

Real-Time Collaboration, 3D Design, Game Development

The higher fidelity of 3D graphics and AI applications made possible by modern GPUs is accelerating industrial digitalization. True-to-reality 3D simulations are transforming product development and design processes, manufacturing, and content creation, delivering a new level of quality, unlimited iterations at no opportunity cost, and faster time to market.

Build virtual production infrastructure at scale to accelerate industrial digitalization with Supermicro's fully integrated solutions, including 4U/5U 8-10 GPU systems, an NVIDIA OVX™ reference architecture optimized for NVIDIA Omniverse Enterprise with Universal Scene Description (USD) connectors, and NVIDIA-certified rackmount servers and multi-GPU workstations.

Workload Sizes

- Large

- Medium

Resources

Content Delivery & Virtualization

Content Delivery Networks (CDNs), Transcoding, Compression, Cloud Gaming/Streaming

Video delivery still accounts for a significant share of today's internet traffic. As streaming providers increasingly offer content in 4K and even 8K, or cloud gaming at higher refresh rates, GPU acceleration with media engines is a must. It multiplies the throughput of streaming pipelines while reducing the amount of data required and improving visual fidelity, thanks to the latest technologies such as AV1 encoding and decoding.

Supermicro's multi-node and multi-GPU systems, such as the 2U 4-node BigTwin® system, meet the stringent requirements of modern video delivery. Each node supports the NVIDIA L4 GPU and offers ample PCIe storage and high networking speeds to handle the demanding data pipeline of content delivery networks.

Workload Sizes

- Large

- Medium

- Small

Resources

Edge AI

Edge Video Transcoding, Edge Inference, Edge Training

Across industries, enterprises whose employees and customers work at edge locations (cities, factories, retail stores, hospitals, and many more) are increasingly investing in deploying AI at the edge. By processing data and running AI and ML algorithms at the edge, businesses overcome bandwidth and latency limitations and enable real-time analytics for timely decision-making, predictive care, personalized services, and streamlined business operations.

Purpose-built, environment-optimized Supermicro Edge AI servers in a variety of compact form factors deliver the performance required for low latency, along with an open architecture with pre-integrated components, broad hardware and software stack compatibility, and privacy and security features that are ready out of the box for complex edge deployments.

Workload Sizes

- Extra Large

- Large

- Medium

- Small

Hyper

Compact

Short-Depth Multi-GPU Edge Server

Fanless

Resources

Broadest Portfolio of AI Systems

Deploying NVIDIA Omniverse™ at Scale

COMPUTEX 2024 CEO Keynote